Harvard:Biophysics 101/2009/Project

Closing Days Update // Zachary Frankel

In case people are not looking at other pages, I wanted to post an update from the modeling group here. We have completed an implementation of logistic regression in Python. Over the next few days we aim to integrate it with a dataset from the biology team and hook it up to an interface from the infrastructure team. We will also document other possible models.
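For readers unfamiliar with the method, here is a minimal sketch of binary logistic regression fitted by batch gradient descent in plain Python. It is not the modeling group's implementation. The function names, the learning rate, the epoch count, and the made-up genotype data (allele counts at two SNPs) are all assumptions for illustration.

```python
import math
from typing import List, Sequence, Tuple


def sigmoid(z: float) -> float:
    """Logistic function, written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def predict_proba(b: float, w: Sequence[float], x: Sequence[float]) -> float:
    """Probability that the binary trait is present for feature vector x."""
    return sigmoid(b + sum(wj * xj for wj, xj in zip(w, x)))


def fit_logistic(X: Sequence[Sequence[float]], y: Sequence[int],
                 lr: float = 0.5, epochs: int = 2000) -> Tuple[float, List[float]]:
    """Fit intercept b and weights w by batch gradient descent on the mean log-loss."""
    n, d = len(X), len(X[0])
    b, w = 0.0, [0.0] * d
    for _ in range(epochs):
        grad_b, grad_w = 0.0, [0.0] * d
        for xi, yi in zip(X, y):
            err = predict_proba(b, w, xi) - yi  # derivative of the log-loss w.r.t. the logit
            grad_b += err
            for j in range(d):
                grad_w[j] += err * xi[j]
        b -= lr * grad_b / n
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
    return b, w


# Made-up example: allele counts at two SNPs; the trait is present whenever SNP 1 carries the allele.
X = [[0, 0], [0, 1], [0, 2], [1, 0], [1, 1], [2, 0], [2, 2], [1, 2]]
y = [0, 0, 0, 1, 1, 1, 1, 1]
b, w = fit_logistic(X, y)
print(round(predict_proba(b, w, [2, 1]), 3), round(predict_proba(b, w, [0, 1]), 3))
```

With real data, the features would be whatever genotype encoding the biology team provides, and held-out data would be needed to judge the fit.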

Update from Biology Group

- We've come up with an easy way to identify SNPs, associated literature, and population diversity data drawing upon HapMap resources.

- The full genomic sequences of these individuals are difficult to obtain.

- We are writing a proposal to get the full genomic sequences of the individuals in the iris pigmentation study.

- We view this tool as something people can use not only to look at their own genomes but also for identification, since being able to search from traits first opens up uses such as:

- Criminal checking

- It would be interesting to look into a 3D generation of a person from a list of known traits.

- Dating-related uses (unlike SNPCupid, you would put in the traits you are looking for and the tool would match you up)

Update from Modeling and Questions // Zachary Frankel

I wanted to update everyone on what part of the modeling group is working on. We are doing a couple of things:

- Recreating some models from the literature, including logistic regression (the paper from Ridhi brings up other methods as well). One issue with these models is that they tend not to assume quantified traits the way we do; they also require very large datasets (at least three orders of magnitude larger), though this is not a reason to exclude them.

- Generalizing the two-gene model. This has involved a couple of refinements, in particular simplifying the non-linear regression by constraining certain parameters. For example, for our $\delta$ terms we should not allow a regression over all possible coefficients but should instead consider only a binary choice between dominance and non-dominance. This will likely require tinkering with any non-linear regressions already programmed in Mathematica. We will try implementing this in Python.
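Here is a sketch of the constrained fit described above. It rests on several stated assumptions. Genotypes are allele counts in {0, 1, 2}. $\delta = 1$ is read as dominant coding, where one copy gives the full effect. $\delta = 0$ is read as non-dominant coding, where the effect is proportional to copy number. The trait is modeled as y = b0 + b1*h1 + b2*h2 + b3*h1*h2. These codings, the model form, and all names are hypothetical. Because each $\delta$ is binary, the sketch simply enumerates the four combinations, fits each by ordinary least squares, and keeps the best one. This takes the place of a free regression over $\delta$.

```python
from itertools import product
from typing import List, Sequence, Tuple


def code_genotype(g: int, dominant: bool) -> float:
    """Map an allele count g in {0, 1, 2} to an effect size in [0, 1]."""
    if g not in (0, 1, 2):
        raise ValueError("allele count must be 0, 1 or 2")
    # delta = 1 (dominant): one copy has the full effect; delta = 0: effect scales with copies.
    return (1.0 if g >= 1 else 0.0) if dominant else g / 2.0


def solve(A: List[List[float]], rhs: List[float]) -> List[float]:
    """Solve A x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    M = [row[:] + [r] for row, r in zip(A, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def fit_two_gene(G: Sequence[Tuple[int, int]], y: Sequence[float]):
    """Try each (delta1, delta2) in {0, 1}^2, fit y = b0 + b1*h1 + b2*h2 + b3*h1*h2
    by ordinary least squares, and keep the choice with the smallest residual sum of squares."""
    best = None
    for d1, d2 in product((False, True), repeat=2):
        rows = []
        for g1, g2 in G:
            h1, h2 = code_genotype(g1, d1), code_genotype(g2, d2)
            rows.append([1.0, h1, h2, h1 * h2])
        # Normal equations (X^T X) beta = X^T y
        XtX = [[sum(r[i] * r[j] for r in rows) for j in range(4)] for i in range(4)]
        Xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(4)]
        beta = solve(XtX, Xty)
        sse = sum((sum(bj * xj for bj, xj in zip(beta, r)) - yi) ** 2
                  for r, yi in zip(rows, y))
        if best is None or sse < best[0]:
            best = (sse, (int(d1), int(d2)), beta)
    return best


# Made-up data: SNP 1 acts dominantly, SNP 2 non-dominantly, with a small interaction term.
G = list(product(range(3), repeat=2))
y = []
for g1, g2 in G:
    h1, h2 = code_genotype(g1, True), code_genotype(g2, False)
    y.append(2.0 + 3.0 * h1 + 1.0 * h2 + 0.5 * h1 * h2)
sse, deltas, beta = fit_two_gene(G, y)
print(deltas)  # (1, 0)
```

Enumerating the discrete choices keeps each inner fit linear. The approach scales as 2^k for k genes, which is manageable for small k.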

We would also like to check in with the other groups about anything they want to see from us in the immediate future. Please reply to this post with your vision of what you'd like from the modeling group. In turn, there are two things we would really like. First, a test case with actual data: rather than just a list of SNPs associated with a trait, we need real data on which to run the model. Second, we would like to interface with Trait-o-Matic, even if just on one trait as a proof of principle; we will coordinate with the infrastructure team on this.

Privacy and Protection // Mindy Shi

I think this is the privacy paper Prof. Church mentioned: Homer et al. 2008. I also found this interesting paper by P3G, Prof. Church, and others: Public Access to Genome-Wide Data: Five Views on Balancing Research with Privacy and Protection.

SNP Interaction Model // Alex L

As Joe pointed out, there is already a body of literature focused on logistic regression. The following paper, for instance, proposes a model that might be of use to us: Logistic Regression Model.

Wishlist/Progress Report from Today // ZMF

Progress

- Classification (eye color)

- Non-linear interaction

- Noise

- Labels

Wishlist

- Test cases

- Focus list

- Trait-o-Matic

- Bugs (SNP, deletions)

- Model ←→ test case

- How much data?

- Mathematica/Matlab

- Height → realistic data

- More than 2 genes

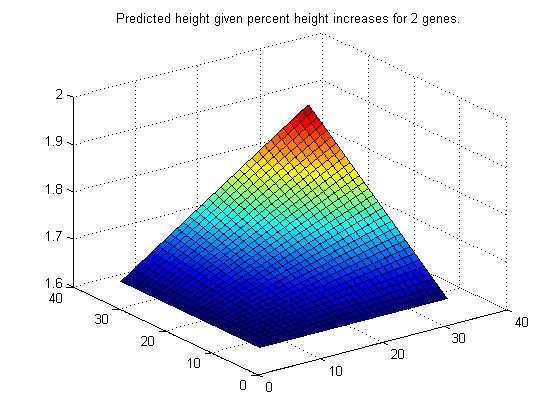

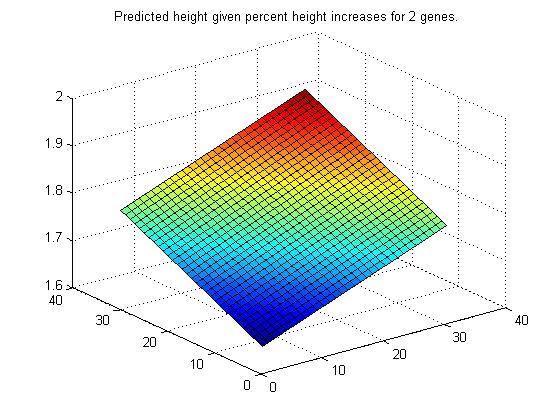

Scripts to Model a 2-Gene Interaction // Ben Leibowicz

I have written a set of scripts in MATLAB to create a simple model of a 2-gene interaction. An appropriate example for this model is an individual whose Trait-O-Matic output includes 2 genes related to height, in the form of predicted percent increases in height. The question is: how do these two effects combine into a single effect on height? Might the combined effect be additive? Multiplicative? Some combination? The modeling group came up with a general parametrized model that includes several types of interactions. My scripts take data (at this early stage, test data that I made up) consisting of the two predicted one-gene effects and the value of the trait for each individual, and they determine the best-fit parameters of the model through a nonlinear regression routine. In the height example, the test data consist of the one-gene height-increase effects for two genes and the corresponding height of each individual, and the resulting model predicts an individual's height given their two one-gene effects.

To demonstrate that the model works well, I created test data representing a strongly additive case and test data representing a strongly multiplicative case.

(Figure: multiplicative test case)

(Figure: additive test case)
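The scripts themselves are in MATLAB, and the exact parametrization is not given here. The following Python sketch therefore assumes one simple form that contains both cases: height = H0 * (1 + p1 + p2 + theta*p1*p2), where p1 and p2 are fractional one-gene increases. Setting theta = 0 gives the additive case, and theta = 1 gives the multiplicative case (1 + p1)(1 + p2). The model form, the grid search over theta, and the test numbers are assumptions, not Ben's code.

```python
from itertools import product
from typing import Optional, Sequence, Tuple


def combined_factor(p1: float, p2: float, theta: float) -> float:
    """(1 + p1 + p2 + theta*p1*p2): theta = 0 is additive, theta = 1 equals (1 + p1)(1 + p2)."""
    return 1.0 + p1 + p2 + theta * p1 * p2


def fit_interaction(p1s: Sequence[float], p2s: Sequence[float], heights: Sequence[float],
                    thetas: Optional[Sequence[float]] = None) -> Tuple[float, float, float]:
    """Nonlinear least squares for height = H0 * combined_factor(p1, p2, theta).

    For a fixed theta the model is linear in H0, so H0 has a closed form; theta is then
    chosen by a grid search (a simple stand-in for a general nonlinear regression routine).
    Returns (sse, theta, H0)."""
    if thetas is None:
        thetas = [i / 1000 for i in range(-1000, 2001)]  # theta in [-1, 2] in steps of 0.001
    best = None
    for theta in thetas:
        f = [combined_factor(a, b, theta) for a, b in zip(p1s, p2s)]
        h0 = sum(h * fi for h, fi in zip(heights, f)) / sum(fi * fi for fi in f)
        sse = sum((h - h0 * fi) ** 2 for h, fi in zip(heights, f))
        if best is None or sse < best[0]:
            best = (sse, theta, h0)
    return best


# Made-up test data in the same spirit: fractional one-gene effects, baseline height 170 cm.
effects = [0.05, 0.1, 0.2, 0.3]
p1s, p2s = zip(*product(effects, repeat=2))
multiplicative = [170 * (1 + a) * (1 + b) for a, b in zip(p1s, p2s)]
additive = [170 * (1 + a + b) for a, b in zip(p1s, p2s)]
print(fit_interaction(p1s, p2s, multiplicative)[1:])  # theta near 1, H0 near 170
print(fit_interaction(p1s, p2s, additive)[1:])        # theta near 0, H0 near 170
```

A gradient-based or Gauss-Newton routine (as in MATLAB's nonlinear regression tools) would replace the grid once more parameters are added.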

This is admittedly a very simplified version of gene interactions, but I believe that now with this framework in place it should not be too difficult to generalize this model to include the effects of more than 2 genes, handle different types of input (like absolute instead of percent effects), and possibly include new types of interactions with new parameters.

Excerpt of notes from biology group meeting // ZMF, AR, JN, RT

Below are some notes I typed up from today's Biology group meeting. Our discussion yielded three ‘models’ for reverse-engineering datasets based on various observations and readings. These are by no means meant to be exhaustive, but they should provide a good starting point for toy datasets the model needs to be able to handle. We broke these down into three initial groups:

1. Complete epistasis/dominance: in various settings, one gene completely dominates (epistatically) the other. For example, baldness is completely epistatic to whether or not one has a widow’s peak. The model should certainly recognize this type of epistasis. As such, we have the following model for eye pigment. Consider genes x and y, where x is whether or not you have eyes and y is a pigment score between 0 and 1, i.e., $x \in \{0, 1\}$ and $y \in (0, 1]$. We claim the relationship between these two in determining your trait is $x \cdot y$. That is, if x is 0 you have no eyes regardless of y, whereas if x is 1 you get whatever score y gives you. For simplicity (in consultation with the math team), we would only consider 10 discrete eye colors, at 0.1 through 1.0.

2. Multiplicative effects: here we consider an additional gene for eye color, say z, which can divide the amount of pigment one has. In particular, z takes the values 1, 0.5, 0.3333, or 0.25, and the relationship is $y \cdot z$. In other words, the model must recognize the same kind of multiplicative relationship as in model 1, but in a case where neither factor can be zero.

3. Additive effects: here we consider an effect that is completely additive. The example we decided on was inspired by the paper on height posted by Prof. Church. We wish to consider genes a and b, which regulate height. For simplicity, assume $a, b \in (-10, 10)$, each mapping to the number of inches above or below the mean height for your group. Let us say that a is linked to leg length and b is linked to torso size, so their effects combine and we wish to find the relationship $a + b$ as the final trait determinant.
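The three toy models can be turned into reverse-engineered datasets with a short generator. This Python sketch is illustrative only: the sample size, seed, and dictionary keys are hypothetical. Model 2 is read as the pigment score multiplied by z (y * z), since z is described as dividing the amount of pigment and neither factor may be zero.

```python
import random
from typing import Dict, List, Tuple


def make_toy_datasets(n: int = 100, seed: int = 0) -> Dict[str, List[Tuple[float, float, float]]]:
    """Return n (input1, input2, trait) rows for each of the three toy models."""
    rng = random.Random(seed)
    colors = [k / 10 for k in range(1, 11)]  # ten discrete pigment scores, 0.1 .. 1.0
    data: Dict[str, List[Tuple[float, float, float]]] = {
        "epistasis": [], "multiplicative": [], "additive": []}
    for _ in range(n):
        # 1. Complete epistasis: x = 1 if eyes are present, y = pigment score; trait = x * y.
        x, y = rng.choice((0, 1)), rng.choice(colors)
        data["epistasis"].append((x, y, x * y))
        # 2. Multiplicative: z in {1, 1/2, 1/3, 1/4} scales the pigment; trait = y * z, never zero.
        z = 1 / rng.choice((1, 2, 3, 4))
        data["multiplicative"].append((y, z, y * z))
        # 3. Additive: a (leg length) and b (torso) in inches relative to the group mean; trait = a + b.
        a, b = rng.uniform(-10, 10), rng.uniform(-10, 10)
        data["additive"].append((a, b, a + b))
    return data


toy = make_toy_datasets()
print(toy["epistasis"][:3])
```

A model that is fit to each dataset should recover x * y, y * z, and a + b respectively. Adding noise to the trait column would make the test more realistic.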

Clearly there is more work to be done, but we want to make sure the model can handle each of these cases and can demonstrate its success on reverse-engineered data.

Infrastructure Background

Here's the infrastructure page!

Request from Thu Nov 5 class: paper on a human quantitative trait example (height).

Discussion

Epistasis article and class notes // ZMF

Here is the article on epistasis mentioned in class today. It provides a terrific overview of epistasis. Also here are notes from today's class if anyone wants to review for inspiration.

Nov 2. Meeting Report // Alex L

Last night, Hossein, Ben, Zach and I met in Quincy to discuss the project. In today's class, we will present the project plan we came up with, and will propose the adoption of a common language in which to discuss the project. Provided that the group agrees with the framework, coding could start as soon as today!

Here's the overview of the structure and resources we have from Trait-o-Matic: File:Trait-o-matic overview.pdf. Keep in mind that this is a work in progress, and I will update it as I gain more of an understanding of how things work. Feel free to ask specific questions, and keep in mind any additional data we might want to use for this project. - FZ

Hey! We created a user page for discussion and ideas: Biology Aspect of Project (AR, AT, JN, RT)

Diagram of project/groups // ZMF

Here is the diagram from today's class - Zach

Zach Frankel

Jobs I think need to be done:

- High level work on finding ways to organize data on polygenic inheritance

- Organizing a database of multifactorial inheritance

- Describing an analytic method to evaluate inheritance

- Learning trait-o-matic code and structure

- Integrating code to include analysis of polygenic traits

Possible additional work/applications

- SNPCupid type analytic tool add-on

- Pharmacogenetic tool add-on

The role I would like to take is at the interface between designing an algorithm to understand polygenic inheritance and integrating it into the program. However, I would like to see how others imagine working, and to work within a cooperative framework.

Development process

- Step 1: Make sure my combination is useful. To do this, I'll need to learn about the nature of uncertainty in gene combination expression; I'd love to find a book or paper on this. This will help me determine three things: 1) whether correlative predictions will be valuable to gene combination research at all; 2) if so, what data is needed; and 3) whether the PGP does or could collect this data from its participants. Ideally I'd do this in the next couple of weeks. Checkpoint: at this point, I'll come back to the class and get feedback about whether the rest of my project is worth pursuing.

- Step 2: Identify prototypical examples of gene combinations with <100% certainty that could be tested.

- Step 3: Create a new variation of Trait-O-Matic that scans PGP participants and outputs "X percent of PGP participants have gene A".

- Step 4: Extend this tool to link with the observational data, if possible. If not, figure out what else to produce as a final product.

- Why I want to do it: I am motivated much more by long-term impact than by any of the other drivers we've talked about. High-level observation: I am entering this project with the goal of answering, "Assume in 2012 the PGP has 100,000 genomes. What can I do now to make this tool as robust as possible?" I am slightly disappointed that we aren't taking this view with the project at large, but that is the question I am trying to answer with my contribution. If anybody has further suggestions for me, please let me know!

- I think that two tools will be crucial for the PGP in the long run: 1) a researcher-facing API; and 2) a mechanism for continuous, dynamic data collection from participants. I think my project could go a long way toward framing how these tools will work. If nothing else, my final project could be a report on "here is what I've learned in our class project about how the API and data collection need to be improved in the PGP." In my experience with software development, this kind of report can be really valuable.
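Step 3's output could be computed with a simple frequency count. The sketch below assumes, hypothetically, that each participant's data has already been reduced to a set of variant labels. It also handles a gene combination (all listed variants present), in the spirit of Step 2. Participant IDs and variant names are placeholders, not PGP data.

```python
from typing import Dict, Iterable, Set


def percent_carrying(participants: Dict[str, Set[str]], variants: Iterable[str]) -> float:
    """Percentage of participants who carry every variant in `variants` (one variant or a combination)."""
    wanted = set(variants)
    if not participants:
        return 0.0
    carriers = sum(1 for carried in participants.values() if wanted <= carried)
    return 100.0 * carriers / len(participants)


def report(participants: Dict[str, Set[str]], variants: Iterable[str]) -> str:
    """Format the result as the sentence described in Step 3."""
    wanted = sorted(set(variants))
    return (f"{percent_carrying(participants, wanted):.1f} percent of PGP participants "
            f"have {' + '.join(wanted)}")


# Hypothetical participant IDs and variant labels, for illustration only.
demo = {
    "participant_1": {"gene_A", "gene_B"},
    "participant_2": {"gene_A"},
    "participant_3": set(),
    "participant_4": {"gene_B"},
}
print(report(demo, ["gene_A"]))            # 50.0 percent of PGP participants have gene_A
print(report(demo, ["gene_A", "gene_B"]))  # 25.0 percent of PGP participants have gene_A + gene_B
```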

Following up on Tues Class // George Church 10-28-09

- Gene-Gene interactions. Example of combining 9 SNPs: Genome-wide association of early-onset myocardial infarction with single nucleotide polymorphisms and copy number variants.

- Examples of Nutrigenomics: It's Not Just Your Genes!.

- A General text: An Introduction to Genetic Analysis by Griffiths et al.

Notes From the Other Day // Zach Frankel 10-26-09

Check out the semi-organized notes from last Thursday. Sorry for not getting these up earlier.

Further comments // Alex L 10-24-09

After the discussion many of us had on Thursday in Quincy, it seems like we're definitely leaning towards the SNPCupid project, provided that we can find a better name for it :) Joe already pointed out its advantages, so I won't go over them again.

In addition, there is no reason why we wouldn't integrate some of the other ideas (concerning metabolism, for instance) as extra features of the software: it seems like once we have the basic framework, it would be fairly doable, and indeed preferable, to add as much functionality as possible.

And so this leads me to my main point: the semester is already halfway done and we really need to start working on the project!!! I think it's best to develop it leisurely and in small increments, allowing us to reflect on the project and improve it as we actually create it, rather than rush to finish it in a week. Again, the sooner we start, the more features we could implement in it; and a richer feature set is what's needed to take the project to the next level.

Best,

Alex

Tonight's Discussion // JT 10-22-09

Thanks again to Zach for booking the room in Quincy - the meeting was fun and productive, and I feel like a lot of ground was covered. Zach has said he'll post notes from the meeting - until then, just a recap on my own conclusions and thoughts going forward:

- The consensus seems to be that we should move forward on SNPCupid, which I'm happy to do. It's attractive because it (1) is do-able, (2) is not obviously being done already, (3) would afford opportunities for each of us to use our unique talents (biological, computational, etc.), and (4) is something "real people," rather than just researchers, could use and find valuable.

- That said, I'm eager to hear alternative project proposals and debate their merits. Like I said, SNPCupid has some desirable traits, but it's certainly less "sexy" than other projects we debated (metabolic or other networks, genealogy-type analyses, etc.)

- In any case, and to avoid this dragging on indefinitely, I think we ought to put all possible projects to a vote at some point in the coming week or two. As someone pointed out, we need to start working on some project or we'll run out of time!

Today was fun, let's hope there are more like it-

Best,

Joe

Other thought about metabolism project // ZMF 10-21-09

One thing I want to make sure we consider is whether we are being ambitious enough to avoid redoing work that has already been done. In particular, I have been reviewing some of the literature on applying pharmacogenomics to drug dosing. I think there are a couple of paths we might want to consider:

- Effects of multiple genes: the conclusion of one of the reviews I found reads: "Inherited difference in a single gene has such a profound effect on the pharmacokinetics or pharmacodynamics of a drug that interindividual difference in one gene has a clinically important effect on drug response. These are the “low-hanging fruit” of pharmacogenetics. However, the effects of most drugs are determined by many proteins, and composite genetic polymorphisms in multiple genes coupled with nongenetic factors will be found to determine drug response." Finding a novel way to statistically estimate the polygenic factors might be interesting. We might even consider developing the groundwork for a method that we could implement once we have more genomes sequenced.

- Aggregating information that might already exist and making it into a usable tool. This is not quite as ambitious, but if other research has already compiled the 'low-hanging fruit', perhaps we could apply it. This seems to be the direction we have largely talked about, but I think making the distinction is important.

Class Project Topics:

Response to JT // ZMF 10-21-09

Joe, I think you raise a valid point about the limits of a metabolism-based project. While you are certainly right that it would be hard to predict how arbitrary alleles influence certain pathways, there are some pathways we understand quite well. Though your example of glycolysis emphasizes that even very well-studied pathways elude complete understanding, I think there is a threshold of knowing enough. In particular, if we know enough to even suggest genes for further research, the project has done something useful. For example, if we identify a SNP as affecting a pathway that we think influences codeine metabolism, and we then find that the allele in actual humans correlates with being an ultra-rapid codeine metaboliser, we are onto something. I think it would be a bit over-ambitious to assume any project could be completely diagnostic in nature; that is to say, without looking at the statistical effect of an allele in humans, we can only make suggestions. Nonetheless, I think these suggestions (a) are useful in and of themselves, and (b) once more data is available on people, their phenotypes (in particular their metabolic phenotypes), and their genomes, could make the project a very useful tool. Admittedly, this is thinking ahead, but I think that's what we should be doing. Also, I just went through this paper and thought it was both interesting and relevant to the project. It presents a case study of a way to look for the role of SNPs in pharmacogenetic pathways; I'll post more on this soon. Cheers,

Zach

Do We Understand Glycolysis? // JT 10-20-09

While in principle I like the idea of a metabolism-based project, I'd like to inject some skepticism. Here's a nice paper on yeast glycolysis: File:Glycolysis.pdf. Even if the methods are a bit foreign to some of you, I think the intro and conclusions can suggest some of the problems in a metabolism-based project. So a few concerns:

- In the absence of high-quality systems-level models of all the metabolic pathways in humans (which we do not have), how can we say something meaningful about the role of individual human alleles in such a pathway?

- Ought we to assume such a model will exist soon (my guess is the $1000 genome will happen first!) and be useful, or is it easier to simply connect known human alleles to their observed phenotypes, without resorting to complex metabolic models?

There seems to be broad agreement that whatever project we work on should be forward-thinking, but still plausible and potentially useful. My concerns are not meant to be criticisms, but I do hope they'll generate some debate over which potential projects are plausible and which are perhaps *too* forward-thinking.

'til Thursday-

JT

SNPCupid // Anna Turetsky & Joe Torella

We decided to build on Anna's project idea for a genetic testing service that would allow couples to assess, before having children, what phenotypes those children might inherit (and with what probability). These phenotypes range from the medically relevant (I-cell disease, diabetes) to the cosmetic (male pattern baldness, eye color) to the beneficial (intelligence, athletic ability).

- A starting point for this kind of work is to cross-reference SNPedia, which catalogs easily and cheaply testable human SNPs associated with some phenotype, and OMIM, which contains a wealth of information on the heritability of genes associated with those SNPs. GeneTests can also be used to gather up-to-date information about medically relevant genetic tests that are currently available. The program would focus mostly on recessive inheritance patterns, with X-linked recessive traits providing an additional layer of complexity. In addition, because heritability information in OMIM is not formatted in a standard way, parsing it systematically would be an interesting (and I think "do-able") challenge.

- Check out our talk page for an example of this idea using male-pattern baldness, and for a discussion of how something like this might be implemented in a systematic way.
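The core arithmetic behind this kind of prediction is the Punnett square. Here is a minimal Python sketch. It assumes single-gene, fully penetrant traits with one dominant allele "A" and one recessive allele "a". The function names and genotype string format are hypothetical. For X-linked recessive traits, the probabilities are per child of the given sex.

```python
from fractions import Fraction
from itertools import product
from typing import Dict


def autosomal_child_genotypes(mother: str, father: str) -> Dict[str, Fraction]:
    """Punnett square: each parent passes on one of its two alleles with probability 1/2."""
    if len(mother) != 2 or len(father) != 2:
        raise ValueError("autosomal genotypes are two alleles, e.g. 'Aa'")
    probs: Dict[str, Fraction] = {}
    for m, f in product(mother, father):
        child = "".join(sorted(m + f))  # "aA" -> "Aa" (uppercase sorts before lowercase)
        probs[child] = probs.get(child, Fraction(0)) + Fraction(1, 4)
    return probs


def p_autosomal_recessive(mother: str, father: str, recessive: str = "a") -> Fraction:
    """Probability that a child is homozygous for the recessive allele."""
    return autosomal_child_genotypes(mother, father).get(recessive * 2, Fraction(0))


def p_x_linked_recessive(mother_x: str, father_x: str, recessive: str = "a") -> Dict[str, Fraction]:
    """mother_x: her two X-linked alleles (e.g. 'Aa'); father_x: his single X-linked allele.
    Returns the probability of being affected for a son and for a daughter."""
    p_from_mother = Fraction(mother_x.count(recessive), 2)
    return {
        # A son gets his father's Y, so only the mother's X matters.
        "son": p_from_mother,
        # A daughter needs a recessive allele from both parents.
        "daughter": p_from_mother if father_x == recessive else Fraction(0),
    }


print(p_autosomal_recessive("Aa", "Aa"))  # 1/4
print(p_x_linked_recessive("Aa", "A"))     # carrier mother, unaffected father
```

Real traits such as male-pattern baldness involve incomplete penetrance and multiple loci, so this is only the starting layer of such a tool.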

In Response to SNPCupid //Ben Leibowicz

Your idea of a genetically oriented "dating service" sparked my interest in our last class meeting, and I think it's a good jumping-off point. It also seems like something we could do given what we have, which is really just computers and access to some existing genomes. I wrote a bit more about this idea on my talk page, and I'm curious whether you think such an idea is a significant enough departure from our current situation to be considered Human 2.0, since what it would do is simply allow a group of individuals to manipulate human evolution in a seemingly positive way through advanced information collection and processing. Doesn't this raise the question: should we instead be focusing on technologies that will allow us to manipulate the genome of an offspring to prevent the inheritance of genetic disorders where they would naturally occur? This seems realistic in the not-so-distant future and might make such a genetic dating service obsolete. What do you think?

JT

I should say first that I'm not really behind the idea of this as some sort of "dating service" (although, admittedly, the project name implies it pretty strongly). I think it's more appropriate to think of this as a tool for inferring F1 phenotypes from very complex parental genotypes, in human populations. At any rate, there's a lot here, so I'll just focus on two questions: (1) is this enough of a departure for "human 2.0," and (2) shouldn't we be focused on interventions rather than informatics? Here are my answers:

- If we consider the cloud of ideas that has gone around regarding human 2.0, they are primarily concepts in which some human ability is enhanced by virtue of greater information. Personalized medicine is something we think of as obviously "human 2.0," but it is not fundamentally different from present-day medicine; it simply updates modern medical treatment with personal (genetic) information. Similarly, we find romantic partners largely through instinct, and it seems logical that part of "human 2.0" would be to incorporate the new information we have (again, genetic) into partner-finding decisions - and that's the idea here. In conclusion, since we think of personalized medicine as "human 2.0," I think it is fair to consider this "SNPCupid" idea similarly "human 2.0" in character.

- Your second question is whether we should focus on interventions, rather than informatics. But I feel that question sets up a false dichotomy; I'm not sure the boundary between informatics and intervention is so great. For instance, some countries are beginning to approve preimplantation genetic screening for in vitro fertilization, to avoid undesirable medical problems: Spain allows preimplantation genetic screening for cancer. Basically, they used in vitro fertilization to produce fertilized embryos, and implanted only those not carrying a gene greatly increasing the risk of breast and ovarian cancer. Since reliable genetic manipulation of human embryos is (seemingly) a long way off, such in vitro selection methods are the best way of avoiding or encouraging the inheritance of certain traits. In order to do this, however, we require knowledge of what traits the child is likely to inherit, and ways of testing for it, before we can rationally select which embryos are "best" (and while I'm aware this drifts into that dark and stormy 'eugenics' category, I think most parents would jump at the chance to prevent their child from inheriting an 80% probability of breast cancer!).

Medicine 2.0 // Filip Zembowicz, Zach Frankel, Alex Ratner, Alex Lupsasca, Ben Leibowicz

- We talked about developing Fil's idea for a drug metabolism tool.

- See especially the flow chart on Fil's page, as well as our talk pages ( Alex's, Zach's, Alex L's)

More on Medicine 2.0 // Jackie Nkeube and Brett Thomas

We also really liked the idea of a drug metabolism tool. We had some additional ideas we wanted to add:

- We made a high-level design of the research implications of such a tool here. We identified differences in CYP450 expression across populations as an area that data from Filip's tool could really advance. Factors to consider include race, gender, and age; any others? (Brett wonders if diet and activity are relevant too.)

- In general, we think that any project like this should be designed with research implications in mind, as the success of a personalized drug recommendation engine depends on the underlying research.

- On that note, we think that the drug metabolism tool should strive to be self-learning, which would have major implications for the underlying architecture. It could learn by tracking user observations about their responses to drugs. This would require some sort of feedback mechanism for users to optionally tell us how the dosage worked.

- There are some websites that provide drug interaction services; maybe we can experiment with an add-on to one of them instead of reinventing the wheel.
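One possible shape for the optional feedback mechanism is a running tally of user reports per genotype, drug, and dose. The class below is a hypothetical sketch, not a design the group settled on. Keys are placeholder strings, and the success rate is a raw proportion without any statistical adjustment.

```python
from collections import defaultdict
from typing import DefaultDict, List, Optional, Tuple

Key = Tuple[str, str, str]  # (genotype label, drug, dose): hypothetical key format


class DoseFeedback:
    """Running tally of optional user reports on whether a given dose worked for them."""

    def __init__(self) -> None:
        # Each entry is [number of "worked" reports, total number of reports].
        self.counts: DefaultDict[Key, List[int]] = defaultdict(lambda: [0, 0])

    def report(self, genotype: str, drug: str, dose: str, worked: bool) -> None:
        tally = self.counts[(genotype, drug, dose)]
        tally[0] += int(worked)
        tally[1] += 1

    def success_rate(self, genotype: str, drug: str, dose: str,
                     min_reports: int = 1) -> Optional[float]:
        """Fraction of reports saying the dose worked, or None if there are too few reports."""
        worked, total = self.counts.get((genotype, drug, dose), (0, 0))
        if total < min_reports:
            return None
        return worked / total


fb = DoseFeedback()
fb.report("genotype_X", "drug_Y", "dose_1", worked=True)
fb.report("genotype_X", "drug_Y", "dose_1", worked=False)
print(fb.success_rate("genotype_X", "drug_Y", "dose_1"))  # 0.5
```

The `min_reports` threshold is one simple guard against drawing conclusions from a handful of reports. A real system would also need consent handling and confounder tracking (diet, activity, other drugs).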

Some Data to Play Around With // Filip Zembowicz 12:01, 13 October 2009 (EDT)

- I've scraped the metabolic pathway data from the COMPOUND and REACTION sections of the KEGG database into a MySQL database. The following two Excel files hold the majority of the compounds involved in biosynthesis and metabolism, along with a listing of the particular biochemical reactions that the compounds take part in. In addition, there is a file that lists all of the reactions, enumerating the compounds that are substrates and products.

- Right now the schemata for these databases are quite primitive. In the next few days I hope to combine the REACTION data with the ENZYME database, so that we can see which enzymes are active in a particular pathway; from there we can start looking for mutations in those enzymes' genes in a particular genome. I might also use some of the other approaches to accessing the KEGG data. I'll also put up a visualization of this data once my database is internet-accessible again, since right now I am just hosting it on my own computer.

- There is also a KEGG DRUG database that has information about drugs approved in Japan, the US, and Europe, including interesting things such as which enzymes are the targets of particular drugs.

- Do explore KEGG -- it has a lot of interesting data!

- File:Compounds.xls

- File:Reaction.xls
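To illustrate joining REACTION data with enzyme data, here is a Python sketch that uses the standard-library sqlite3 module as a stand-in for MySQL. The table and column names are hypothetical and are not Filip's schema. The rows (glucose phosphorylation by hexokinase, EC 2.7.1.1) are illustrative, and their identifiers should be checked against KEGG.

```python
import sqlite3
from typing import List

SCHEMA = """
CREATE TABLE compound (id TEXT PRIMARY KEY, name TEXT);
CREATE TABLE reaction (id TEXT PRIMARY KEY, equation TEXT);
CREATE TABLE reaction_compound (
    reaction_id TEXT REFERENCES reaction(id),
    compound_id TEXT REFERENCES compound(id),
    role TEXT CHECK (role IN ('substrate', 'product'))
);
CREATE TABLE reaction_enzyme (
    reaction_id TEXT REFERENCES reaction(id),
    ec_number TEXT
);
"""


def build_demo_db() -> sqlite3.Connection:
    """In-memory database with a few illustrative rows (verify IDs against KEGG before use)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO compound VALUES (?, ?)", [
        ("C00002", "ATP"), ("C00008", "ADP"),
        ("C00031", "D-Glucose"), ("C00092", "D-Glucose 6-phosphate"),
    ])
    conn.execute("INSERT INTO reaction VALUES (?, ?)",
                 ("R00299", "C00002 + C00031 <=> C00008 + C00092"))
    conn.executemany("INSERT INTO reaction_compound VALUES (?, ?, ?)", [
        ("R00299", "C00002", "substrate"), ("R00299", "C00031", "substrate"),
        ("R00299", "C00008", "product"), ("R00299", "C00092", "product"),
    ])
    conn.execute("INSERT INTO reaction_enzyme VALUES (?, ?)", ("R00299", "2.7.1.1"))
    return conn


def enzymes_for_compound(conn: sqlite3.Connection, compound_id: str) -> List[str]:
    """EC numbers of enzymes catalysing any reaction in which the compound takes part."""
    rows = conn.execute(
        """SELECT DISTINCT re.ec_number
           FROM reaction_compound AS rc
           JOIN reaction_enzyme AS re ON re.reaction_id = rc.reaction_id
           WHERE rc.compound_id = ?
           ORDER BY re.ec_number""",
        (compound_id,),
    ).fetchall()
    return [ec for (ec,) in rows]


conn = build_demo_db()
print(enzymes_for_compound(conn, "C00031"))  # ['2.7.1.1']
```

A separate link table between reactions and compounds (with a role column) avoids storing substrate and product lists as text. This is what makes the enzyme join a single query.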

Final Report Summary

Harvard Biophysics 101: Computational Biology Fall 2009

Expansion of Trait-o-Matic

Introduction

Both the Human Genome Project and the advent of affordable, wide-scale computer technology occurred in the same time span at the end of the millennium. Therefore, the next logical step was to apply the rapidly-evolving field of computer science to the new, enormous amount of information obtained about the human genetic sequence. This correlated rise of two normally disjoint fields allowed researchers to explore mathematical possibilities not available from wet-lab experiments alone.

Furthermore, the Internet has had a profound impact on society in general, and the same is true for biomedical research. Online databases put an enormous amount of raw information directly at researchers’ fingertips, and Web tools like Trait-o-Matic <http://snp.med.harvard.edu/> have evolved to let researchers search for links between genotypes and phenotypes with a single algorithm. Trait-o-Matic was first envisioned and implemented as part of a Harvard undergraduate course on computational biology; the current (Fall 2009) incarnation of the class aimed to expand the utility of Trait-o-Matic as a term project. The overarching aim was to further modularize Trait-o-Matic, with the long-term goal of allowing the algorithm to handle polygenic inheritance.

Methods and Objectives

For these purposes, the class was split into three teams. Although each subgroup was autonomous, overlap between groups, joint meetings, and multiple memberships were far from uncommon. The main goals and accomplishments of each group are listed below.

Biology

The primary objective of the biology group was to provide the scientific background for expanding Trait-o-Matic and to serve as a link between class aspirations and real-world possibilities. The first part of their role was to conduct an exhaustive literature search for potential examples of epistatic interactions in polygenic traits. After encountering difficulties obtaining authentic data from the Rotterdam study associated with a paper on eye color and gene influence on phenotype (1), the Biology group generated three model data sets for the other groups to use as a concrete development tool. Each set consisted of a matrix recording the presence or absence of heterozygous, homozygous-dominant, or homozygous-recessive genotypes at single nucleotide polymorphisms associated with eye color, with 1s indicating that a given genotype was present and 0s indicating that it was not. Mathematical relations between matrix values were then used to calculate whether the “subject” in the data set would have blue, brown, or intermediate eye color. The following link contains full information for the Biology group: <http://openwetware.org/wiki/User:The_Biology_Group>

Members: Ridhi Tariyal, Jackie Nkuebe, Anugraha Raman, Anna Turetsky
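Here is a sketch of how such an indicator matrix can be encoded and read back. It assumes three 0/1 columns per SNP, in the order homozygous-dominant, heterozygous, homozygous-recessive. The column order, the function names, and especially the eye-color thresholds in `classify` are hypothetical placeholders, not the Biology group's actual relations.

```python
from typing import List, Sequence

# Column order used for each SNP (an assumption for this sketch).
GENOTYPES = ("hom_dominant", "heterozygous", "hom_recessive")


def encode(genotypes: Sequence[str]) -> List[int]:
    """Build one matrix row: three 0/1 indicator columns per SNP, exactly one of them set."""
    row: List[int] = []
    for g in genotypes:
        if g not in GENOTYPES:
            raise ValueError(f"unknown genotype {g!r}")
        row.extend(1 if g == name else 0 for name in GENOTYPES)
    return row


def recessive_allele_count(row: Sequence[int]) -> int:
    """Read back the number of recessive alleles: 1 per heterozygous SNP, 2 per homozygous-recessive SNP."""
    total = 0
    for i in range(0, len(row), 3):
        _, het, hom_rec = row[i:i + 3]
        total += het + 2 * hom_rec
    return total


def classify(row: Sequence[int]) -> str:
    """Hypothetical placeholder rule mapping the recessive-allele fraction to an eye-color label."""
    n_snps = len(row) // 3
    frac = recessive_allele_count(row) / (2 * n_snps)
    if frac == 1.0:
        return "blue"
    if frac <= 0.25:
        return "brown"
    return "intermediate"


row = encode(["hom_recessive", "heterozygous"])
print(row, classify(row))  # [0, 0, 1, 0, 1, 0] intermediate
```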

Modeling

The Modeling group, on the other hand, was tasked with taking information from the Biology group’s efforts and producing a mathematical algorithm to predict phenotypic response. For this purpose, traits were divided into three main categories: continuous, discrete, and binary. Continuous traits can take on any value within a certain range, with height as a primary example. Discrete traits have several distinct possible values, like eye color. Finally, binary traits have only two possibilities, like the presence or absence of a certain disease. Both logistic and non-linear regression models were developed to predict phenotypic responses. The logistic regression model, implemented in Python for binary traits, had some success, and it became the primary model because it can be extended from binary to continuous traits and because of its higher computational efficiency. Artificial neural networks were also explored as a future way to implement the model, but time and resource constraints made them impractical to use during this project. Future work includes using neural networks and designing the software to be used with large-scale, genuine data sets. <http://openwetware.org/wiki/Harvard:Biophysics_101/2009:Modeling>

Members: Ben Leibowicz, Zach Frankel, Alex Lupsasca, Joe Torella, Azari Hossein

Infrastructure

The job of the Infrastructure group was to bring technical expertise to the project and to implement everything on the Trait-o-Matic framework. Several freelogy accounts were obtained to enable Trait-o-Matic modification and testing, most notably filip.freelogy.org. Time was spent becoming familiar with the tool’s code structure in CodeIgniter and learning how to integrate new implementations with the existing software. Besides technical improvements, the main achievement was developing a tool to take models and incorporate them into Trait-o-Matic. These models can be based either on the work of the Modeling group or on an individual paper; examples of such models were developed and uploaded to the site. We are optimistic about the future of this project and about further modularizing and expanding Trait-o-Matic. The group’s website is at the following link: <http://openwetware.org/wiki/Harvard:Biophysics_101/2009/Infrastructure>

Members: Filip Zembowicz, Alex Ratner, Brett Thomas, Kelly Brock, Mindy Shi
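Trait-o-Matic's actual plug-in mechanism is not described here. The following Python sketch therefore only illustrates the general idea of a model registry. Each model, whether from the Modeling group or from a paper, is a function from a participant's genotypes to a prediction, and all registered models can be run in one pass. The registry, the decorator, and the placeholder SNP identifiers are hypothetical.

```python
from typing import Callable, Dict, Mapping

# A model maps a participant's genotypes (SNP identifier -> allele count) to a predicted value.
Model = Callable[[Mapping[str, int]], float]

MODELS: Dict[str, Model] = {}


def register(name: str) -> Callable[[Model], Model]:
    """Decorator that adds a model to the registry under a unique name."""
    def decorator(fn: Model) -> Model:
        if name in MODELS:
            raise ValueError(f"model {name!r} already registered")
        MODELS[name] = fn
        return fn
    return decorator


@register("additive_demo")
def additive_demo(genotypes: Mapping[str, int]) -> float:
    # Hypothetical per-allele weights keyed by placeholder SNP identifiers.
    weights = {"rs_example_1": 0.5, "rs_example_2": -0.25}
    return sum(w * genotypes.get(snp, 0) for snp, w in weights.items())


def run_all(genotypes: Mapping[str, int]) -> Dict[str, float]:
    """Run every registered model on one participant's genotypes."""
    return {name: model(genotypes) for name, model in MODELS.items()}


print(run_all({"rs_example_1": 2, "rs_example_2": 1}))  # {'additive_demo': 0.75}
```

Keeping models behind one call signature is what lets new models be added without touching the code that runs them.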

Results and Conclusion

Overall, this class has made definite strides towards our goal of enabling Trait-o-Matic to model multigenic effects. Using data and information from the Biology group, the Modeling group developed a simplified algorithm to predict certain phenotypes, and the Infrastructure group added this functionality to Trait-o-Matic. The different subgroups did an excellent job of bridging different fields of expertise, ultimately coming together to deliver a final product. However, this project is still in its infancy. There is a great deal of room to expand, with an enormous wish list ranging from implementing a neural-network model to modularizing our product enough that it will accept genotypic indicators other than rsIDs. In the future, we hope to continue our work and take advantage of the new biological and technical advances that the future will undoubtedly bring, perhaps even the full implementation of Human 2.0.

Literature Cited

1. Liu, Fan et al. “Eye Color and the Prediction of Complex Phenotypes from Genotypes.” Current Biology, Vol. 19, No. 5, R192 (2009).

Interesting Links:

- Genome Commons provides some good resources about interpretation of human genome variations.

- Interesting paper on Reconstruction of Human Metabolic Network which may be helpful for the pharmacogenomics project idea. Harriswang 20:20, 8 October 2009 (EDT)