BIO254:Biological Neural Network

Biological neural network

From Wikipedia, the free encyclopedia

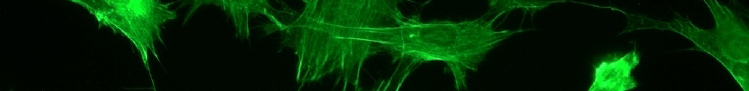

In neuroscience, a neural network is something of a conceptual challenge: the transition from neuroanatomy, a rigorously descriptive discipline of observed structure, to the designation of the parameters that delimit a 'network' can be problematic. In outline, a neural network describes a population of physically interconnected neurons, or a group of disparate neurons whose inputs or signalling targets define a recognizable circuit. Communication between neurons often involves an electrochemical process. The interface through which a neuron interacts with surrounding neurons usually consists of several dendrites (input connections), which are connected via synapses to other neurons, and one axon (output connection). If the sum of the input signals surpasses a certain threshold, the neuron generates an action potential (AP) at the axon hillock and transmits this electrical signal along the axon.

In contrast, a neuronal circuit is a functional entity of interconnected neurons that influence each other (similar to a control loop in cybernetics).

Early study

(see also: history of Connectionism)

Early treatments of neural networks can be found in Herbert Spencer's Principles of Psychology, 3rd edition (1872), Theodor Meynert's Psychiatry (1884), William James' Principles of Psychology (1890), and Sigmund Freud's Project for a Scientific Psychology (composed 1895). The first rule of neuronal learning, now known as Hebbian learning, was described by Hebb in 1949. Under this rule, Hebbian pairing of pre- and postsynaptic activity can substantially alter the dynamic characteristics of the synaptic connection and therefore facilitate or inhibit signal transmission. The neurophysiologist Warren Sturgis McCulloch and the logician Walter Pitts published the first works on the processing of neural networks, beginning with "A Logical Calculus of the Ideas Immanent in Nervous Activity" (1943). They showed theoretically that networks of artificial neurons could implement logical, arithmetic, and symbolic functions. (The later paper "What the Frog's Eye Tells the Frog's Brain" (1959), by Lettvin, Maturana, McCulloch, and Pitts, is an experimental study of the frog visual system.) Simplified models of biological neurons were set up, now usually called perceptrons or artificial neurons. These simple models accounted for neural summation, i.e. potentials at the postsynaptic membrane summate in the cell body. Later models also provided for excitatory and inhibitory synaptic transmission.
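The claim that networks of artificial neurons can implement logical functions can be illustrated with a few threshold units. The following is a minimal sketch, not McCulloch and Pitts' original formalism: the function names, the signed weights, and the thresholds are assumptions chosen for illustration.

```python
def mp_unit(inputs, weights, threshold):
    """Binary threshold unit: output 1 if the weighted input sum reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def AND(a, b):
    # Both excitatory inputs must be active to reach threshold 2.
    return mp_unit([a, b], [1, 1], threshold=2)


def OR(a, b):
    # One active excitatory input is enough to reach threshold 1.
    return mp_unit([a, b], [1, 1], threshold=1)


def NOT(a):
    # A single inhibitory input (weight -1, an illustrative assumption) with threshold 0.
    return mp_unit([a], [-1], threshold=0)


def XOR(a, b):
    # No single threshold unit computes XOR; a small two-layer network does.
    return AND(OR(a, b), NOT(AND(a, b)))
```

Composing units in layers, as in `XOR`, is what lets such networks express functions that a single unit cannot.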

Connections between neurons

(see also: synapse)

The connections between neurons are much more complex than those implemented in neural computing architectures. The basic kinds of connections between neurons are chemical synapses and electrical gap junctions. One principle by which neurons work is neural summation, i.e. potentials at the postsynaptic membrane sum in the cell body. If the depolarization of the neuron at the axon hillock rises above threshold, an action potential occurs that travels down the axon to the terminal endings to transmit a signal to other neurons. Excitatory and inhibitory synaptic transmission is realized mostly by excitatory postsynaptic potentials (EPSPs) and inhibitory postsynaptic potentials (IPSPs).
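Neural summation against a threshold can be sketched in a few lines. This is a deliberately idealized illustration: it assumes that postsynaptic potentials add linearly, and the resting potential and threshold values are typical textbook figures chosen for the example, not values given in this article.

```python
REST_MV = -70.0       # assumed resting membrane potential (illustrative)
THRESHOLD_MV = -55.0  # assumed firing threshold (illustrative)


def membrane_potential(psps_mv):
    """Resting potential plus the linear sum of all postsynaptic potentials.

    EPSPs are positive (depolarizing) and IPSPs negative (hyperpolarizing).
    """
    return REST_MV + sum(psps_mv)


def fires(psps_mv):
    """True if the summed potential reaches the threshold."""
    return membrane_potential(psps_mv) >= THRESHOLD_MV


epsps = [4.0] * 5    # five excitatory inputs of 4 mV each
ipsps = [-3.0] * 3   # three inhibitory inputs of -3 mV each
```

With these assumed values, `fires(epsps)` is true because the excitatory inputs alone depolarize the cell to -50 mV, while `fires(epsps + ipsps)` is false because the inhibitory inputs pull the sum back to -59 mV.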

On the electrophysiological level, there are various phenomena which alter the response characteristics of individual synapses (called synaptic plasticity) and individual neurons (intrinsic plasticity). These are often divided into short-term plasticity and long-term plasticity. Long-term synaptic plasticity is often contended to be the most likely memory substrate (see Synaptic weight based memory). Usually the term "plasticity" refers to changes in the brain that are caused by activity or experience.

Connections display temporal and spatial characteristics. Temporal characteristics refer to the continuously modified, activity-dependent efficacy of synaptic transmission, called spike-dependent synaptic plasticity. Several studies have observed that the efficacy of this transmission can undergo a short-term increase (called facilitation) or decrease (depression) according to the activity of the presynaptic neuron. The induction of long-term changes in synaptic efficacy, by long-term potentiation (LTP) or long-term depression (LTD), depends strongly on the relative timing of the onset of the EPSP generated by the presynaptic AP and the postsynaptic action potential. LTP is induced by a series of action potentials which cause a variety of biochemical responses. Eventually the reactions cause the insertion of new receptors into the cellular membrane of the dendrites, or increase the efficacy of the receptors through phosphorylation.
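The dependence of LTP and LTD on relative spike timing is often summarized with an exponential timing window, a phenomenological model commonly called spike-timing-dependent plasticity (STDP). The sketch below assumes that form; the amplitudes and time constants are hypothetical values chosen for illustration and do not come from this article.

```python
import math

A_PLUS = 0.010       # assumed maximal potentiation per spike pair
A_MINUS = 0.012      # assumed maximal depression per spike pair
TAU_PLUS_MS = 20.0   # assumed decay constant of the potentiation window
TAU_MINUS_MS = 20.0  # assumed decay constant of the depression window


def stdp_weight_change(dt_ms):
    """Weight change for one spike pair, with dt_ms = t_post - t_pre.

    Pre-before-post (dt_ms > 0) potentiates; post-before-pre (dt_ms < 0)
    depresses. The effect shrinks as the spikes move further apart in time.
    """
    if dt_ms > 0:
        return A_PLUS * math.exp(-dt_ms / TAU_PLUS_MS)
    if dt_ms < 0:
        return -A_MINUS * math.exp(dt_ms / TAU_MINUS_MS)
    return 0.0
```

For example, `stdp_weight_change(10.0)` is positive and larger than `stdp_weight_change(40.0)`, while `stdp_weight_change(-10.0)` is negative.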

An action potential cannot propagate backward along the axon: after it has travelled down a given segment, the h gate (inactivation gate) of the voltage-gated sodium channels (Na+ channels) closes, so that any transient opening of the m gate cannot cause a change in the intracellular [Na+]. This prevents the generation of an action potential back towards the cell body.

A single impulse arriving at a neuromuscular junction is sufficient to trigger contraction of the postsynaptic muscle cell. In the spinal cord, however, at least 75 afferent neurons are required to produce firing. This picture is further complicated by variation in time constant between neurons, as some cells can integrate their EPSPs over a wider period of time than others.
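The effect of the time constant on integration can be illustrated with a toy model in which each EPSP rises instantly and then decays exponentially. Both the instantaneous rise and the parameter values are simplifying assumptions made for this sketch.

```python
import math


def summed_epsp(t_ms, arrival_times_ms, amplitude_mv, tau_ms):
    """Summed depolarization at time t_ms from EPSPs that decay with time constant tau_ms."""
    total = 0.0
    for t0 in arrival_times_ms:
        if t_ms >= t0:  # an EPSP contributes only after it has arrived
            total += amplitude_mv * math.exp(-(t_ms - t0) / tau_ms)
    return total


arrivals = [0.0, 5.0, 10.0]  # three inputs spaced 5 ms apart (illustrative)
short_tau = summed_epsp(10.0, arrivals, amplitude_mv=1.0, tau_ms=5.0)
long_tau = summed_epsp(10.0, arrivals, amplitude_mv=1.0, tau_ms=20.0)
```

In this model `long_tau` exceeds `short_tau`: the cell with the longer time constant still retains much of the earlier EPSPs when the last one arrives, so the same input train brings it closer to threshold.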

While synaptic depression has been particularly widely observed in synapses in the developing brain, it has been speculated that it changes to facilitation in adult brains.

Representations in neural networks

A receptive field is a small region within the entire visual field. Any given neuron responds only to a subset of stimuli within its receptive field. This property is called tuning. In vision, neurons in the earlier visual areas have simpler tuning. For example, a neuron in V1 may fire to any vertical stimulus in its receptive field. In the higher visual areas, neurons have complex tuning. For example, in the fusiform gyrus, a neuron may only fire when a certain face appears in its receptive field. It is also known that many parts of the brain generate patterns of electrical activity that correspond closely to the layout of the retinal image (this is known as retinotopy). It seems further that imagery that originates from the senses and internally generated imagery may have a shared ontology at higher levels of cortical processing (see e.g. Language of thought). For many parts of the brain, some characterization has been made of the tasks that are correlated with their activity (see list of Brain regions).
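Simple tuning of the kind described for V1 is often pictured as a bell-shaped response curve over stimulus orientation. The sketch below assumes a Gaussian curve; the preferred orientation (90 degrees, taken here to mean vertical), the tuning width, and the peak firing rate are hypothetical values for illustration.

```python
import math


def orientation_response(stimulus_deg, preferred_deg=90.0,
                         width_deg=20.0, max_rate_hz=50.0):
    """Firing rate of a model neuron with Gaussian orientation tuning."""
    # Orientation is periodic over 180 degrees: a bar at 0 looks the same as at 180.
    diff = abs(stimulus_deg - preferred_deg) % 180.0
    diff = min(diff, 180.0 - diff)
    return max_rate_hz * math.exp(-(diff ** 2) / (2.0 * width_deg ** 2))
```

The model neuron responds most strongly to a vertical bar, `orientation_response(90.0)`, less to a tilted bar such as `orientation_response(60.0)`, and hardly at all to a horizontal one, `orientation_response(0.0)`.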

In the brain, memories are very likely represented by patterns of activation amongst networks of neurons. However, how these representations are formed, retrieved and reach conscious awareness is not completely understood. Cognitive processes that characterize human intelligence are mainly ascribed to the emergent properties of complex dynamic characteristics in the complex systems that constitute neural networks. Therefore, the study and modeling of these networks have attracted broad interest under different paradigms, and many different theories have been formulated to explain various aspects of their behavior. One of these aspects, the subject of several theories, is considered a special property of a neural network: the ability to learn complex patterns.

Philosophical issues

(see also: Philosophy of perception)

Today most researchers believe in representations of some kind (representationalism) or, more generally, in particular mental states (cognitivism). On this view, perception is information processing that transfers information from the world into the brain/mind, where it is further processed and related to other information (cognitive processes). A few others envisage a direct path back into the external world in the form of action (radical behaviourism).

Another issue, called the binding problem, relates to the question of how the activity of more or less distinct populations of neurons dealing with different aspects of perception is combined to form a unified perceptual experience and to have qualia.

Study methods

(see Neuropsychology and Cognitive neuropsychology)

Different neuroimaging techniques have been developed to investigate the activity of neural networks. The use of 'brain scanners' or functional neuroimaging to investigate the structure or function of the brain is common, either simply as a way of better assessing brain injury with high-resolution pictures, or by examining the relative activations of different brain areas. Such technologies include fMRI (functional magnetic resonance imaging), PET (positron emission tomography) and CAT (computed axial tomography). Functional neuroimaging uses specific brain imaging technologies to take scans of the brain, usually while a person is doing a particular task, in an attempt to understand how the activation of particular brain areas is related to the task. The techniques used in functional neuroimaging are, in particular, fMRI, which measures hemodynamic activity that is closely linked to neural activity, as well as PET and electroencephalography (EEG).

Connectionist models serve as a test platform for different hypotheses of representation, information processing, and signal transmission. Lesioning studies in such models, e.g. artificial neural networks, where some of the nodes are deliberately destroyed to see how the network performs, can also yield important insights into the working of several cell assemblies. Similarly, simulations of dysfunctional neurotransmitters in neurological conditions (e.g., dopamine in the basal ganglia of Parkinson's patients) can yield insights into the underlying mechanisms for patterns of cognitive deficits observed in the particular patient group. Predictions from these models can be tested in patients and/or via pharmacological manipulations, and these studies can in turn be used to inform the models, making the process recursive.
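A lesioning study of the kind described above can be sketched with a small associative memory. The example uses a Hopfield-style network with Hebbian weights as a stand-in; this choice of model, the two stored patterns, and all function names are assumptions made for illustration, not a method taken from this article.

```python
def hebbian_weights(patterns):
    """Hebbian outer-product weights for +1/-1 patterns, with no self-connections."""
    n = len(patterns[0])
    w = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j]
    return w


def recall(weights, cue, lesioned=(), sweeps=5):
    """Update units one at a time; lesioned units stay silent (state 0)."""
    n = len(cue)
    state = [0 if i in lesioned else cue[i] for i in range(n)]
    for _ in range(sweeps):
        for i in range(n):
            if i in lesioned:
                continue
            h = sum(weights[i][j] * state[j] for j in range(n))
            if h > 0:
                state[i] = 1
            elif h < 0:
                state[i] = -1
            # h == 0: no net input, so the unit keeps its current state
    return state


def surviving_accuracy(state, target, lesioned=()):
    """Fraction of non-lesioned units whose state matches the stored pattern."""
    alive = [i for i in range(len(target)) if i not in lesioned]
    return sum(state[i] == target[i] for i in alive) / len(alive)


P1 = [1, 1, 1, 1, -1, -1, -1, -1]
P2 = [1, -1, 1, -1, 1, -1, 1, -1]
W = hebbian_weights([P1, P2])
CUE = [-1] + P1[1:]  # stored pattern P1 with its first unit corrupted

LIGHT = (6, 7)              # destroy two of the eight nodes
HEAVY = (2, 3, 4, 5, 6, 7)  # destroy six of the eight nodes
intact = surviving_accuracy(recall(W, CUE), P1)
light = surviving_accuracy(recall(W, CUE, LIGHT), P1, LIGHT)
heavy = surviving_accuracy(recall(W, CUE, HEAVY), P1, HEAVY)
```

In this toy setup the intact and lightly lesioned networks both repair the corrupted cue, whereas the heavily lesioned network no longer can, which is the kind of graded breakdown that lesioning studies in models are used to probe.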
