User:Nuri Purswani/NetworkReconstruction/ProjectOverview/Classes


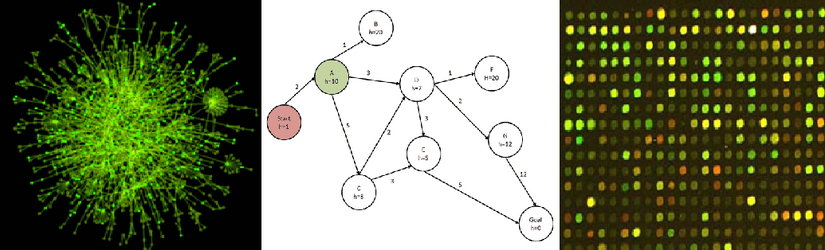

The task of biological network inference can be done at different scales [2]:

- Inference of gene-sequence interactions.

- Inference of gene-gene interactions.

The methods considered in this project focus on gene-gene interactions, although the implementations are defined more generally and are suitable for general system identification problems. The four main types of existing network reconstruction algorithms, along with their assumptions and limitations, are summarised below:

Clustering

The principle behind this type of method is that genes that are co-expressed are likely to be functionally related. Quantifying the "relatedness" between genes requires some form of distance measure. Common choices include Euclidean distance, Pearson correlation and Spearman rank correlation [3]. The main limitations of these methods include:

- Their inability to precisely infer gene-gene interactions: genes may be co-expressed, but clustering methods are unable to identify the precise interactions between genes.

- "There is no one size that fits all": clustering methods impose a particular structure on the dataset of interest [3]. Consequently, there is no guarantee that any given published algorithm will recover the true structure of a dataset.
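As a minimal sketch of the distance measures named above, the following pure-Python functions compute Euclidean distance, Pearson correlation and Spearman rank correlation between expression profiles. The gene names and expression values are hypothetical and chosen only for illustration; this is not the project's implementation.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two expression profiles."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def pearson(a, b):
    """Pearson correlation coefficient between two profiles."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    sd_a = math.sqrt(sum((x - mean_a) ** 2 for x in a))
    sd_b = math.sqrt(sum((y - mean_b) ** 2 for y in b))
    return cov / (sd_a * sd_b)

def ranks(values):
    """Ranks starting at 1; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def spearman(a, b):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(a), ranks(b))

# Hypothetical expression profiles (four conditions per gene).
profiles = {
    "geneA": [1.0, 2.0, 3.0, 4.0],
    "geneB": [2.0, 4.0, 6.0, 8.0],  # same trend as geneA, larger amplitude
    "geneC": [4.0, 3.0, 2.0, 1.0],  # opposite trend to geneA
}

for first, second in [("geneA", "geneB"), ("geneA", "geneC")]:
    a, b = profiles[first], profiles[second]
    print(first, second, euclidean(a, b), pearson(a, b), spearman(a, b))
```

In this toy data the Euclidean distance places geneA closer to geneC than to geneB, while both correlation measures group geneA with geneB. This illustrates the second limitation: the choice of measure already imposes a structure on the data.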

Bayesian Networks

Bayesian networks are graph-based models that capture relations of conditional independence between variables [4]. They rely on the Markov assumption [5], which states that any given node in a directed acyclic graph is independent of its non-descendants, given its parents. This means that:

- Static Bayesian networks (i.e. networks that infer biological relationships from steady-state data) are of limited use for interpreting biological information. Feedback is a common characteristic of biological processes, and a directed acyclic graph governed by this rule of conditional independence cannot represent it.

- Dynamic Bayesian networks overcome this problem by using time-series data as input.

- An advantage of Bayesian networks is that they can cope with noisy data and stochasticity.

Bayesian inference algorithms commonly use optimisation procedures such as simulated annealing or the expectation-maximisation algorithm to maximise a score that measures how well a candidate model explains the observed data. Following from Bayes' rule:

[math]\displaystyle{ P(G|D) = \frac{P(D|G)\,P(G)}{P(D)} }[/math]

where [math]\displaystyle{ P(G|D) }[/math] is the score we are trying to maximise for a candidate network [math]\displaystyle{ G }[/math] given the observed data [math]\displaystyle{ D }[/math].

Scoring schemes also include terms that penalise additional connections, such as the Bayesian information criterion (BIC), the Akaike information criterion (AIC) and Dirichlet-based scores [3]. These will be revisited in the next section.
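To illustrate how a penalised score trades goodness of fit against the number of connections, here is a minimal sketch of a BIC-style score in the form that is maximised (log-likelihood minus a complexity penalty). The function name `bic_score`, the two candidate networks and their log-likelihoods and parameter counts are all hypothetical, not results from this project.

```python
import math

def bic_score(log_likelihood, n_params, n_samples):
    """BIC-style score to maximise: fit minus a penalty on model size."""
    return log_likelihood - 0.5 * n_params * math.log(n_samples)

# Hypothetical candidate networks: the dense one fits slightly better
# but needs twice as many parameters.
candidates = {
    "sparse": {"log_likelihood": -120.0, "n_params": 3},
    "dense": {"log_likelihood": -118.5, "n_params": 6},
}
n_samples = 50  # assumed number of observations

scores = {
    name: bic_score(c["log_likelihood"], c["n_params"], n_samples)
    for name, c in candidates.items()
}
best = max(scores, key=scores.get)
print(scores, best)
```

With these assumed numbers the penalty outweighs the small gain in likelihood, so the sparser network receives the higher score.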

Information Theory

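Information-theoretic approaches use the mutual information measure to quantify the dependence between a pair of genes [math]\displaystyle{ i }[/math] and [math]\displaystyle{ j }[/math]. Each edge is set to 1 if the mutual information exceeds a chosen threshold, and to 0 otherwise. The general formula for mutual information is:

[math]\displaystyle{ MI_{ij} = H_i + H_j - H_{ij} }[/math]

where [math]\displaystyle{ H_{ij} }[/math] is the joint entropy of the pair and [math]\displaystyle{ H_i }[/math] is the entropy of a given quantity:

[math]\displaystyle{ H_i = -\sum_{k} p(x_k) \log p(x_k) }[/math]

with [math]\displaystyle{ p(x_k) }[/math] the probability that the expression level of gene [math]\displaystyle{ i }[/math] takes the value [math]\displaystyle{ x_k }[/math].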
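A minimal sketch of this thresholding procedure is given below. It assumes the common entropy-based definition (mutual information as the sum of the two marginal entropies minus the joint entropy), discretised expression levels, and empirical frequencies as probability estimates. The gene names, data and threshold are hypothetical, and the sketch omits refinements used by published algorithms such as ARACNE.

```python
import math
from collections import Counter

def entropy(symbols):
    """Shannon entropy (in bits) of a sequence of discrete symbols."""
    n = len(symbols)
    counts = Counter(symbols)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())

def mutual_information(xi, xj):
    """MI = H_i + H_j - H_ij, estimated from empirical frequencies."""
    joint = list(zip(xi, xj))
    return entropy(xi) + entropy(xj) - entropy(joint)

def mi_network(data, threshold):
    """Adjacency dict: entry is 1 where MI exceeds the threshold, else 0."""
    genes = sorted(data)
    adjacency = {g: {h: 0 for h in genes} for g in genes}
    for a in genes:
        for b in genes:
            if a != b and mutual_information(data[a], data[b]) > threshold:
                adjacency[a][b] = 1
    return adjacency

# Hypothetical discretised expression levels (0 = low, 1 = high).
data = {
    "g1": [0, 0, 1, 1, 0, 0, 1, 1],
    "g2": [0, 0, 1, 1, 0, 0, 1, 1],  # identical to g1
    "g3": [0, 1, 0, 1, 0, 1, 0, 1],  # empirically independent of g1
}

network = mi_network(data, threshold=0.5)
print(network)
```

Because mutual information is symmetric, the resulting network is undirected: the measure indicates dependence between two genes but not which one regulates the other.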

Ordinary Differential Equations

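These involve inferring the parameters [math]\displaystyle{ A, B, C, D }[/math] of models of the linear state-space form:

[math]\displaystyle{ \dot{x} = Ax + Bu, \quad y = Cx + Du }[/math]

where [math]\displaystyle{ x }[/math] and [math]\displaystyle{ y }[/math] are species in the biological network we are trying to infer and [math]\displaystyle{ u }[/math] denotes an external input to the system.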
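The idea of parameter inference can be sketched on a simplified special case. The example below assumes a linear model with no external input and fully measured states, so that only the matrix A has to be estimated (an assumption made here for brevity; it does not cover B, C or D). It simulates a hypothetical two-species system with forward Euler steps and then recovers A by least squares on finite differences. The function names, the matrix `A_true`, the initial state and the step size are illustrative choices.

```python
def simulate(A, x0, dt, steps):
    """Forward-Euler simulation of dx/dt = A x for a two-species system."""
    xs = [list(x0)]
    for _ in range(steps):
        x = xs[-1]
        dx = [sum(A[i][j] * x[j] for j in range(2)) for i in range(2)]
        xs.append([x[i] + dt * dx[i] for i in range(2)])
    return xs

def estimate_A(xs, dt):
    """Least-squares estimate of A from finite differences.

    Solves the normal equations A = (sum dx x^T) (sum x x^T)^-1.
    """
    S = [[0.0, 0.0], [0.0, 0.0]]  # accumulates sum of x x^T
    R = [[0.0, 0.0], [0.0, 0.0]]  # accumulates sum of dx x^T
    for x, x_next in zip(xs, xs[1:]):
        dx = [(x_next[i] - x[i]) / dt for i in range(2)]
        for i in range(2):
            for j in range(2):
                S[i][j] += x[i] * x[j]
                R[i][j] += dx[i] * x[j]
    det = S[0][0] * S[1][1] - S[0][1] * S[1][0]
    S_inv = [
        [S[1][1] / det, -S[0][1] / det],
        [-S[1][0] / det, S[0][0] / det],
    ]
    return [
        [sum(R[i][k] * S_inv[k][j] for k in range(2)) for j in range(2)]
        for i in range(2)
    ]

# Hypothetical interaction matrix: species 2 activates species 1,
# and both species decay.
A_true = [[-1.0, 0.5], [0.0, -2.0]]
dt = 0.01
xs = simulate(A_true, x0=[1.0, 1.0], dt=dt, steps=200)
A_hat = estimate_A(xs, dt)
print(A_hat)
```

The data here are noise-free and generated with the same Euler scheme used for estimation, so the recovery is essentially exact. With real, noisy measurements the finite differences amplify noise, and the zero entry of A would not be recovered exactly without some form of regularisation or model selection.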