Physics307L:People/Smith/Notebook

Lab Summaries

- See lab manual for what this lab was about.

- See my Lab Notebook entry for my remarks and recorded data.

Data

Reported value for fall time using AC coupling:

- The Digital Storage Oscilloscope (DSO) has a built-in function to measure "fall time". It reported 239.6 ms to 241.0 ms. We believed this to be incorrect.

- Using the cursors in the DSO interface to measure the time between the peak value and 10% of that value, with the frequency generator set to a frequency between 2.343 Hz and 2.430 Hz, gives a fall time of 58.00 ms.

- Subsequent measurements using this method after changing the frequency on the frequency generator:

- Frequency: 4.798Hz - 5.061Hz, fall time: 57.00ms.

- Frequency: 7.257Hz - 7.262Hz, fall time: 55.00ms.

Note: The DSO displays these times in integer values of milliseconds. Therefore, the precision of these measurements is necessarily limited. I suspect the way the DSO rounds these numbers makes the screen display, for instance, 55.00ms for any measurement between 54.5ms and 55.5ms.
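The cursor measurements above can be turned into a time constant with a short calculation. This is a minimal sketch, assuming the AC-coupled signal decays as a single exponential, V(t) = V0·exp(-t/τ), so that the time to fall from the peak to 10% of the peak is τ·ln(10). The function name `time_constant_from_fall_time` is made up for illustration; the exponential model is an assumption, not something taken from the lab manual.

```python
import math

def time_constant_from_fall_time(fall_time_ms, fraction=0.10):
    """Return tau in ms, assuming V(t) = V0 * exp(-t / tau).

    fall_time_ms is the time for the signal to decay from its peak
    to `fraction` of the peak (10% for the cursor method above).
    """
    return fall_time_ms / math.log(1.0 / fraction)

# Fall times reported above (ms), one per frequency range.
fall_times_ms = [58.00, 57.00, 55.00]

for t in fall_times_ms:
    tau = time_constant_from_fall_time(t)
    print(f"fall time {t:.2f} ms -> tau ~ {tau:.2f} ms")
```

Under this assumption the three fall times correspond to time constants that agree to within a couple of milliseconds, which is about the same size as the spread in the readings themselves.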

What did you learn?

I had little previous experience with oscilloscopes before this lab. I was able to familiarize myself with the way oscilloscopes are hooked up, how they used to work (scanning CRT displays, etc.), and how to interact with the modern DSO we used (it has an interface similar to an ATM, with many useful functions that can be selected and displayed using the buttons on its face). I also learned a bit about the concept of AC coupling, which I had never heard of before. I also wrote my first lab notebook entry in the wiki format, which was very straightforward.

What could make the lab better next year?

Well, I saw a video online of someone who interfaced an oscilloscope with a soundcard on his PC and was able to do very, very impressive things; he wrote things on the screen, had very neat patterns and effects. I don't think we should do all that, but it's an interesting concept, and it might be worth explaining how someone would be able to do all that. You can see the video here.

Purpose

The objective of this lab is to experimentally determine the value of the Rydberg Constant by measuring the atomic emission spectral wavelengths of hydrogen and the hydrogen-like element deuterium (which is hydrogen with an extra neutron thrown in).

Data

See the data collected section of my lab notebook entry.

I used Excel to analyze the data (you can see my Excel spreadsheet here). I used our measurements of the wavelengths of the atomic emission spectra of hydrogen and of deuterium to calculate the measured Rydberg Constant for each (they are, believe it or not, slightly different despite the name "Rydberg Constant"). I also calculated the expected Rydberg Constants for hydrogen and deuterium from fundamental physical constants, and compared these with our measured values. They are reported below:

| Element | Direction | Expected Rydberg Constant ([math]\displaystyle{ m^{-1} }[/math]) | Avg. Measured Rydberg Constant ([math]\displaystyle{ m^{-1} }[/math]) | Percent Difference |
|---|---|---|---|---|
| Hydrogen | Clockwise | 1.09677286888108E+07 | 1.09797802016246E+07 | -0.1099% |
| Hydrogen | Counterclockwise | 1.09677286888108E+07 | 1.09583397614656E+07 | 0.0856% |
| Deuterium | Clockwise | 1.09647449477397E+07 | 1.09846377155094E+07 | -0.1814% |
| Deuterium | Counterclockwise | 1.09647449477397E+07 | 1.09636035647508E+07 | 0.0104% |

And our best estimates of our measured Rydberg Constants:

| Element | Direction | Measured Rydberg Constant ([math]\displaystyle{ m^{-1} }[/math]) |
|---|---|---|
| Hydrogen | Clockwise | 10979780.2016246 [math]\displaystyle{ \pm }[/math] 2810.24625 |
| Hydrogen | Counterclockwise | 10958339.7614656 [math]\displaystyle{ \pm }[/math] 3908.3275 |
| Deuterium | Clockwise | 10984637.7155094 [math]\displaystyle{ \pm }[/math] 6215.96625 |
| Deuterium | Counterclockwise | 10963603.5647508 [math]\displaystyle{ \pm }[/math] 3312.62625 |
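The percent differences in the comparison table can be checked with a few lines of Python. This is a small sketch using the expected and average measured values copied from that table. The sign convention, (expected - measured) / expected, is inferred from the signs in the table rather than stated in the notebook, and the helper name `percent_difference` is only illustrative.

```python
# (element, direction, expected R in 1/m, average measured R in 1/m)
rows = [
    ("Hydrogen", "Clockwise", 1.09677286888108e7, 1.09797802016246e7),
    ("Hydrogen", "Counterclockwise", 1.09677286888108e7, 1.09583397614656e7),
    ("Deuterium", "Clockwise", 1.09647449477397e7, 1.09846377155094e7),
    ("Deuterium", "Counterclockwise", 1.09647449477397e7, 1.09636035647508e7),
]

def percent_difference(expected, measured):
    """Signed percent difference relative to the expected value."""
    return (expected - measured) / expected * 100.0

results = [percent_difference(exp, meas) for _, _, exp, meas in rows]

for (element, direction, _, _), pd in zip(rows, results):
    print(f"{element:9s} {direction:16s} {pd:+.4f}%")
```

With this convention a negative value means the measured constant came out larger than the expected one, which is the case for both clockwise data sets.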

Conclusions and Remarks

I think that there was very little systematic error in this experiment, other than the uncertainty about the direction in which the instrument was calibrated (and we tried to get around that by repeating each measurement while turning the knob one way and then the other). There was obviously some random error when measuring the wavelengths of the atomic spectra, since we didn't record the exact same number over and over. By taking five measurements for each atomic emission line, though, I think we were able to control the effects of random error on our final calculations. Because of this, we were able to measure one of the fundamental constants of atomic physics to a high degree of precision. Using an old, clunky spectrometer and getting results within about 0.2% of the expected value of the Rydberg Constant for hydrogen-like elements is, I think, really quite remarkable. I was very satisfied! I think it also shows the central limit theorem in action, which was kind of exciting.

I would probably do things slightly differently, were I to repeat this experiment. The lab manual neglected to give a procedure for calibrating the instrument, so we spent quite a while fiddling around with all the knobs. Once we finally figured out how to calibrate it, we neglected to record some very important information about the calibration procedure. The spectrometer has a fair amount of backlash and therefore is usually calibrated for taking measurements by turning the knob one way. Unfortunately, we forgot which way the instrument was calibrated, so we just took twice as many measurements - some by turning the knob clockwise only, some by turning the knob counterclockwise only. It's not too hard to do that, but it takes more time.

Purpose

The purpose of this lab is to experimentally measure the value of Planck's Constant by measuring the stopping voltage of known wavelengths of light. A mercury emission lamp is used as the source of light. The light is diffracted by a diffraction grating and is focused using a lens onto a vacuum photodiode tube. The light knocks electrons off of a plate in the photodiode tube and they collect on another plate. The amassed electrons on the collector plate create an electric field which will prevent further electrons from reaching the anode (their kinetic energy won't be sufficient to overcome the potential barrier). The potential of the anode, called a stopping potential or stopping voltage, is measured using a digital multimeter. A unity gain op-amp (an op-amp is an operational amplifier) is placed between the anode and the voltmeter so that the voltmeter terminals see a very high resistance, in order to prevent electrons from leaving the anode ("current leaking").

Data

The first part of the lab was designed to demonstrate that the stopping voltages are independent of the intensity of light striking the photodiode tube. It did demonstrate this, and we also found that it takes longer for the photodiode tube to build up enough charge to reach the stopping potential for lower intensities of light. These data can be seen here.

The objective of the second part of the lab was to find the ratio of Planck's constant over the charge of the electron by looking at the slope of a linear regression of the stopping voltages vs. frequencies. I had some trouble figuring out how to estimate the error of these data, but ended up with some acceptable answer, I think. You can see the data here.

- My best estimate of Planck's constant based on the data we took was 7.14817E-34 J·s, with an uncertainty of +2.03352E-36/-4.06703E-36 J·s.

- (To get this estimate, I took the average of the 1st and 2nd order maxima stopping potentials. The error estimate was found by doing a linear regression on the largest and smallest values of the atomic emission spectral lines' stopping potentials. I don't know whether that was a good way to do it, and it looks a little weird since my error estimate is not symmetric; the deviation to the lower end of the estimate is larger than that to the high end - in fact, it seems to be exactly twice as large! This is rather odd.)
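A quick numerical check of the estimate above may help put it in context. This sketch uses only the numbers reported in this section plus the accepted values of h and e; the variable names are made up for illustration. It backs out the regression slope h/e implied by the reported h, compares the estimate with the accepted value, and confirms the factor of two between the lower and upper error bars noted above.

```python
E_CHARGE = 1.602176634e-19    # elementary charge, C (accepted value)
H_ACCEPTED = 6.62607015e-34   # Planck constant, J*s (accepted value)

# Values reported in this section.
h_best = 7.14817e-34          # J*s
err_plus = 2.03352e-36        # J*s
err_minus = 4.06703e-36       # J*s

# The stopping voltage vs. frequency line has slope h/e, so the
# reported h implies this slope (in V*s).
implied_slope = h_best / E_CHARGE

# How far the estimate sits from the accepted value, in percent.
percent_off = (h_best - H_ACCEPTED) / H_ACCEPTED * 100.0

# Ratio of the lower to the upper error bar.
asymmetry = err_minus / err_plus

print(f"implied slope h/e: {implied_slope:.4e} V*s")
print(f"difference from accepted h: {percent_off:.2f}%")
print(f"lower/upper error ratio: {asymmetry:.4f}")
```

The gap between the estimate and the accepted value is many times larger than either error bar, which is consistent with a systematic offset rather than random scatter.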

Conclusions and Remarks

I didn't particularly enjoy the lab manual's explanation of photodiode tubes and such. It left some questions in my mind; what was the unity gain op-amp used for? Why did the h/e apparatus have a power supply? I also think if I were to do this lab over, I would take more measurements of the first order maxima stopping voltages. I wasn't very satisfied with my best estimate of Planck's constant, and I believe that more data points would help. I also think that there are some rather astounding sources of systematic error somewhere; the relative error of my best estimate is quite low, but my mean estimate for it is almost 10% off of the accepted value. Perhaps if the lab had been completely dark, it would have produced slightly more accurate results; I'm not sure. I think the digital multimeter may have been slightly broken, as well, since it displayed 0.04 V when the h/e apparatus was shorted out (it should have shown 0.00 V). Or, maybe the unity gain op-amp wasn't entirely precise; I think that the ratio of resistances on op-amp inputs is supposed to decide the gain it has - maybe the resistances weren't precisely the same, but within some tolerance acceptable to the manufacturer.

SJK 00:48, 25 October 2007 (CDT)

Really great job on this lab! You accepted the challenge of squeezing the best data possible out of less than perfect equipment, and got great results! Very nice job with the data and error analysis.

Purpose

We set out to measure the speed of light using a stopwatch and running really fast. It didn't work too well, so we figured we might revert to the lab manual.

SJK 00:30, 25 October 2007 (CDT)

Hey! So you know where the stopwatch is? Anyway, sorry about that today. I spoke with Bill Miller, and he said he's going to look for some this weekend, so hopefully we will be all set next week.

It turns out that the writeup in the lab manual is adequate, if somewhat dense.

In order to measure the speed of light, we didn't build a huge rotating mirror assembly like Michelson did (his work, by the way, is very impressive. His early attempts to measure c produced 299,910±50 km/s - and this was way back in 1879! By 1922, he was able to tweak his methodology to produce 299,796±4 km/s. That's remarkable!) Instead, we used an electronic setup. In our setup, an LED sends a pulse of light to a photomultiplier tube (PMT). A device called a Time-to-Amplitude Converter (TAC) is triggered once by a circuit which discharges a capacitor to blink the LED and is triggered once again by the PMT registering a drop in voltage caused by the incident photons. The TAC produces a voltage proportional to the time delay between triggering events. By using an oscilloscope we can measure the voltage at the peak of the pulse the TAC produces. By varying the distance the light has to travel and recording the resulting TAC voltages, we can find the speed of light. Converting the voltages to time (which is simple; just multiply by [math]\displaystyle{ 5 \cdot 10^{-9} }[/math]), plotting distance vs. time, and finding the slope of the best fit line should produce a reasonable estimate for the speed of light.
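The analysis described above (convert TAC voltage to time, then fit a line) can be sketched in a few lines. This is only an illustration: the distances and voltages below are synthetic values generated from an assumed speed of light, not our recorded data, and the names `fit_line`, `SECONDS_PER_VOLT` and `C_ASSUMED` are hypothetical. The 5e-9 factor is the one quoted above, taken here to be in seconds per volt, and the constant voltage offset stands in for fixed cable and electronics delays.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

SECONDS_PER_VOLT = 5e-9   # conversion factor quoted in the text (assumed s/V)
C_ASSUMED = 2.9979e8      # m/s, used only to generate the synthetic data

# Synthetic example: light path lengths (m) and the TAC voltages they
# would produce, including a constant 2 V offset for fixed delays.
distances_m = [0.2 * i for i in range(1, 8)]
voltages_v = [(d / C_ASSUMED) / SECONDS_PER_VOLT + 2.0 for d in distances_m]

# Convert voltage to time, then fit distance against time.
times_s = [v * SECONDS_PER_VOLT for v in voltages_v]
c_estimate, offset_m = fit_line(times_s, distances_m)

print(f"slope of distance vs. time: {c_estimate:.4e} m/s")
```

Because only the slope matters, a constant delay from unequal cable lengths shifts the intercept but does not change the estimate of c.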

Data

The slope of these data was found to be [math]\displaystyle{ 3.063 \cdot 10^8 }[/math], with a standard error of [math]\displaystyle{ 1.83 \cdot 10^7 }[/math], making the best estimate of the speed of light [math]\displaystyle{ (3.063 \pm 0.18) \cdot 10^8 \frac{m}{s} }[/math].

This is a relative error of 5.96%, and the value 3.063E8 m/s is 2.17% different from the accepted value of the speed of light, [math]\displaystyle{ 2.9979 \cdot 10^8 \frac{m}{s} }[/math] (according to Wikipedia).

Conclusions and Remarks

The use of a multichannel analyzer, as suggested by Tomas Mondragon, might smooth things out a bit.

Also, there appears to be an outlier in the plot of our data; the time measured for a distance of 140 cm into the tube was less than the time measured for a distance of 130 cm into the tube. I don't know what went wrong here, but it's worth noting. The TAC must have a delay of at least 2 nanoseconds between triggering events; perhaps by positioning the LED module 140 cm into the tube we made the "halt" event trigger before the "start" event (as the wires are of unequal length; the wire between the LED module and TAC is much, much longer than the wire between the PMT and TAC). We were supposed to use a time delay mechanism to add a time delay to the "halt" trigger, but we found it to be unnecessary for longer distances between the LED and PMT.

Since the readings on the DSO were unstable, with the minimum voltage of the PMT fluctuating significantly, I'm pretty happy with these results.

Purpose

Using PASCO Scientific's Millikan Oil Drop Apparatus (Model AP-8210), experimentally determine the value of the elementary charge and confirm the quantization of charge.

Please also see PASCO's Lab Manual

By spraying mineral oil of known density into the apparatus with an atomizer and measuring the time it takes a droplet to fall one major reticle line on the viewing scope (which is 0.5 mm), we can infer the radius (and therefore volume and mass) of the droplet using Stokes' Law (which describes the behavior of spherical objects in a fluid). By applying voltage to the two plates above and below the viewing chamber, we subject the droplet to an electric field. If there is a net charge on our droplet, the electric field will exert a force on the droplet and make it rise or fall, depending on the polarity. In the spirit of Geordi LaForge, we might have to reverse the polarity (and that obviously fixes any problem which might arise on the Enterprise) in order for the droplet to rise. Measuring the time it takes the droplet to rise one major reticle line and doing some algebra will approximate the net charge of the droplet.

Data and Analysis

SJK 00:03, 19 November 2007 (CST)

Awesome work and neat data analysis! Quick comment here: while grading Linh's lab, I learned that "barometric pressure" means "corrected to sea" level, whereas you are interested in "absolute pressure." From your Excel sheet, it appeared that you didn't use the actual Albuquerque absolute pressure, but rather the corrected to sea level value? This would affect your answer, of course.

Please also see My Excel worksheet for more in depth examination of my calculations.

My lab partner (Kyle Martin) and I were able to record the fall times and rise times for 18 oil droplets. We were able to measure these times more than once on 11 of these droplets. Following the equations laid out in the PASCO lab manual, we were able to determine the charges of these 18 droplets. It's reasonable to assume that the charge on any given droplet is constant, and therefore we averaged the calculated charges for those droplets we were able to measure several times. Looking at the calculated charges, it seems that the charges are grouped. I took the mean of the smallest group to be the elementary charge. This seems reasonable to me, though we took data from only 18 droplets and therefore could be missing charges of smaller magnitude; I mean, we haven't taken nearly enough data to determine for certain that the smallest charge that we see is in fact the elementary charge. If we were to get serious about this, it might be necessary to take a quintillion measurements to rule out fractional charge.

SJK 00:05, 19 November 2007 (CST)

Again really great job with this lab! I like the linear fit method, and the fact that your slope matches your "n=1" value is a very good indication that you guessed correctly for the minimum charge unit. I wonder how bad it would look if you guess incorrectly? (such as starting with n=2). Also, see my other note about air pressure. Great work!

Anyway, it's assumed that the mean of the first group is the elementary charge and each subsequent group is an integer multiple of the elementary charge. While this isn't a rigorous proof of the quantization of charge, Millikan also had the notion of quantized charge before commencing his measurements - see J.J. Thomson's "corpuscles" of charge.

| Average Charge Per Drop (x10^-19 C) | Group |

|---|---|

| 1.170284691 | Group 1 |

| 1.261815586 | Group 1 |

| 1.326204984 | Group 1 |

| 1.357593958 | Group 1 |

| 1.384181124 | Group 1 |

| 1.495305796 | Group 1 |

| 1.499256751 | Group 1 |

| 1.579841893 | Group 1 |

| 1.705486127 | Group 1 |

| 2.834670357 | Group 2 |

| 3.377621558 | Group 2 |

| 3.379771496 | Group 2 |

| 3.788927915 | Group 2 |

| 3.858074883 | Group 2 |

| 4.273635645 | Group 3 |

| 5.132206708 | Group 3 |

| 7.765736898 | Group 4 |

| 9.667025415 | Group 5 |

| Group | Average (x10^-19 C) | Suspected Multiple of q |

|---|---|---|

| Group 1 | 1.419996768 | 1 |

| Group 2 | 3.447813242 | 2 |

| Group 3 | 4.702921176 | 3 |

| Group 4 | 7.765736898 | 5 |

| Group 5 | 9.667025415 | 7 |

Conclusions and Remarks

When I sat down to write this lab up, I was at a loss as to how to analyze this data. After reading the notebook of my lab partner, Kyle Martin (i.e., all of the credit for this idea should go to him! It was his idea! See his graph!), I tried graphing the calculated charges vs. their suspected integer multiple of [math]\displaystyle{ e^- }[/math]. There appears to be a linear relationship (See Figure 1). Using Excel to determine the slope of the line that fits this data (using the least squares method) and its uncertainty produces my best estimate of the value of the elementary charge:

[math]\displaystyle{ (1.50 \pm 0.039)\times 10^{-19}\;C }[/math]

This value is 6.1% less than the accepted value of [math]\displaystyle{ 1.602\times 10^{-19}\;C }[/math].
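The idea behind the linear fit can be illustrated with the group averages tabulated above. This is a minimal sketch, not a reproduction of the Excel analysis: it fits only the five group averages, and it forces the line through the origin (zero multiples of the charge should mean zero charge), which is an assumption made here. The Excel fit may have used different points or a free intercept, so this slope need not match the value quoted above. The helper name `slope_through_origin` is made up for illustration.

```python
# (suspected multiple of q, group average charge in units of 1e-19 C),
# copied from the group table above.
groups = [
    (1, 1.419996768),
    (2, 3.447813242),
    (3, 4.702921176),
    (5, 7.765736898),
    (7, 9.667025415),
]

def slope_through_origin(points):
    """Least-squares slope of y = slope * x with the intercept fixed at zero."""
    sxy = sum(n * q for n, q in points)
    sxx = sum(n * n for n, _ in points)
    return sxy / sxx

E_ACCEPTED = 1.602  # accepted elementary charge, in units of 1e-19 C

e_fit = slope_through_origin(groups)
percent_below = (E_ACCEPTED - e_fit) / E_ACCEPTED * 100.0

print(f"slope: {e_fit:.3f} x 10^-19 C")
print(f"difference from accepted value: {percent_below:.1f}% low")
```

Changing the suspected multiples (for example, starting the first group at n = 2) and re-running the fit is a quick way to see how sensitive the slope is to that guess.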

Possible sources of error include Brownian motion (as the droplets of oil are very small), random errors associated with timing (delay between the droplet crossing the major reticle and the stopwatch button being pressed, etc.), losing track of the oil droplet through the viewing scope, uncertainties of the electric field, uncertainties of the temperature (unsteady resistance readings of the thermistor, or problems interpreting the resistance as a temperature), inaccuracies in the determination of the viscosity of air (the table is for dry air, what will the effect of humidity be?), uncertainties of the barometric pressure, inaccuracies of Stokes' Law (or the assumptions he made to derive the equation) and uncertainties of the density of our mineral oil.

Were I to repeat this experiment, I would have tried very hard to find a microscope camera or to use a projector of some sort to observe the viewing chamber, as looking through the viewing scope for extended periods of time is very wearisome. I would also want to take many more measurements of the fall times and rise times, as 18 droplets are inadequate for determining the minimum quantum of charge with any certainty. The apparatus also has a radiation source which is designed to knock off electrons from the droplets, allowing subsequent measurements of the same droplet to be examining less net charge. We weren't able to use this feature of the apparatus, as we had trouble following a single droplet for many up-and-down cycles.

Also, reading the PASCO lab manual, I found that Millikan used a small cell of water between the lamp and viewing chamber to absorb the heat produced by the lamp and effectively thermally isolate the viewing chamber from the lamp. Our apparatus didn't have this feature, and it's likely that as our experiment progressed the temperature of our viewing chamber increased. My lab partner and I didn't record the resistance of the thermistor for each trial we ran, as we perhaps should have.

Purpose

Using Electron Spin Resonance (or ESR, also known as electron paramagnetic resonance), we will experimentally determine the g-factor of the electron by looking at the spin-flip transition of a free electron in a magnetic field.

Methods

We used a kit manufactured by the German company Leybold Didactic GmbH in our experiment. We placed a sample of Diphenyl-Picryl-Hydrazyl (or DPPH, which has a total angular momentum of zero and one unpaired electron, and therefore has only one resonant frequency for a given magnetic field) in a coil. The coil is placed in a uniform magnetic field and inserted in a probe unit which produces an RF signal. A pair of Helmholtz coils connected to a DC power supply produce the magnetic field, and an alternating current is also run through the Helmholtz coils in order to vary the magnetic field slightly. The oscillating magnetic field removes the need to know the frequency of the RF signal precisely, which is somewhat difficult. Because the sample is placed in a magnetic field, the energy degeneracy between the electron spin-up and spin-down states has been lifted.

Resonance occurs when the energy of the RF signal, tuned to a frequency [math]\displaystyle{ \nu }[/math], matches the energy difference between electron spin-up and spin-down states, which is the product [math]\displaystyle{ g_s \mu_B B }[/math] where [math]\displaystyle{ g_s }[/math] is the g-factor we are trying to find, [math]\displaystyle{ \mu_B }[/math] is the Bohr magneton and B is the strength of the magnetic field the sample is placed in. (This means [math]\displaystyle{ h \nu = g_s \mu_B B }[/math]). Electrons in the lower energy state can absorb a photon, causing them to jump to the higher state. This absorption will change the permeability of the test sample, which will change the inductance of the coil it is wrapped in, which will in turn change the oscillations of the RF signal.

In practice, we used a dual-channel oscilloscope to compare the voltage across the Helmholtz coils and the voltage across the RF oscillator. At resonance, the voltage across the RF oscillator should drop twice per cycle of the sinusoidally varying magnetic field (and the voltage across the Helmholtz coils). This happens when the drops in the RF oscillator voltage line up with the Helmholtz coil voltage passing through the value about which it oscillates. See Figure 2 for an illustration of what the oscilloscope traces should look like for resonance.

To determine the frequency of the RF oscillator, we used a frequency divider which takes megahertz frequencies and outputs kilohertz frequencies, which are more easily measured by our frequency counter. In order to determine the magnetic field necessary for the sample to be in resonance for a given frequency of the RF signal, we measure the current flowing through the Helmholtz coils and use the Biot-Savart law to calculate the field produced.

We took 27 measurements of the current through the Helmholtz coils and the frequency of the RF oscillator at resonance using three different coils. The different coils were used because they have different inductances and therefore will produce different frequency RF oscillations.

Data and Analysis

The 27 measurements are below, in Tables 3, 4 and 5.

| Current (Amps) | Frequency (MHz) |

|---|---|

| 0.248 | 13.05 |

| 0.293 | 15.6 |

| 0.365 | 18.55 |

| 0.376 | 21.25 |

| 0.483 | 24.2 |

| 0.519 | 27.55 |

| 0.573 | 30 |

| Current (Amps) | Frequency (MHz) |

|---|---|

| 0.608 | 32.95 |

| 0.663 | 36.05 |

| 0.744 | 40.75 |

| 0.898 | 47.35 |

| 0.952 | 52.95 |

| 1.05 | 57.55 |

| 1.196 | 64.95 |

| 1.252 | 68.25 |

| 1.324 | 73.65 |

| Current (Amps) | Frequency (MHz) |

|---|---|

| 1.363 | 75.25 |

| 1.457 | 81.75 |

| 1.556 | 87 |

| 1.64 | 91.95 |

| 1.776 | 98.05 |

| 1.863 | 103.55 |

| 1.951 | 108.95 |

| 2.054 | 114.25 |

| 2.0905 | 116.95 |

| 2.217 | 123 |

| 2.29 | 126.95 |

Since the Helmholtz coils were wired in parallel and presumably have identical resistances, the identical voltage across them should produce identical currents. This means that the total current through the coils, which is recorded in the tables above, is twice the current through each coil. To determine the g-factor, I needed to calculate the magnetic field produced by the Helmholtz coils from the current through each coil. The manual gives an equation, [math]\displaystyle{ B = \mu_0 \left( \frac{4}{5} \right) ^{\frac{3}{2}} N \frac{I}{r} }[/math], where [math]\displaystyle{ \mu_0 = 1.256 \times 10^{-6}\ \mathrm{V \cdot s / (A \cdot m)} }[/math] is the magnetic constant, N = 320 is the number of turns in each coil, I is the current through each coil and r = 6.75 cm is the radius of the coils.

I then solved for the g-factor for each of our 27 measurements.
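The calculation described above can be sketched as follows, using the 27 (total current, frequency) pairs from the tables and the resonance condition hν = g μ_B B. The coil constants are the ones quoted from the manual; the values of h and μ_B are standard accepted values, and the function names are made up for illustration. Small differences from the spreadsheet results could come from the constants or rounding used there.

```python
H = 6.62607015e-34        # Planck constant, J*s
MU_B = 9.2740100783e-24   # Bohr magneton, J/T
MU_0 = 1.256e-6           # magnetic constant as quoted from the manual, V*s/(A*m)
N_TURNS = 320             # turns per coil
RADIUS_M = 0.0675         # coil radius, m

# (total current in A, RF frequency in MHz), from the three tables above.
measurements = [
    (0.248, 13.05), (0.293, 15.6), (0.365, 18.55), (0.376, 21.25),
    (0.483, 24.2), (0.519, 27.55), (0.573, 30.0),
    (0.608, 32.95), (0.663, 36.05), (0.744, 40.75), (0.898, 47.35),
    (0.952, 52.95), (1.05, 57.55), (1.196, 64.95), (1.252, 68.25),
    (1.324, 73.65),
    (1.363, 75.25), (1.457, 81.75), (1.556, 87.0), (1.64, 91.95),
    (1.776, 98.05), (1.863, 103.55), (1.951, 108.95), (2.054, 114.25),
    (2.0905, 116.95), (2.217, 123.0), (2.29, 126.95),
]

def helmholtz_field(total_current_a):
    """Field (T) at the center of the pair; each coil carries half the total current."""
    current_per_coil = total_current_a / 2.0
    return MU_0 * (4.0 / 5.0) ** 1.5 * N_TURNS * current_per_coil / RADIUS_M

def g_factor(total_current_a, freq_mhz):
    """Solve h * nu = g * mu_B * B for g."""
    return H * freq_mhz * 1e6 / (MU_B * helmholtz_field(total_current_a))

g_values = [g_factor(i, f) for i, f in measurements]
mean_g = sum(g_values) / len(g_values)

print(f"mean g-factor over {len(g_values)} measurements: {mean_g:.4f}")
```

Since g is proportional to ν/B, any fixed-percentage error in the calculated field (for example from coil spacing or alignment) shifts every g value by the same percentage.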

Conclusions

The average of the g-factors produced by solving the equation above is [math]\displaystyle{ \bar{g_s} = 1.8276 }[/math] with a standard error of [math]\displaystyle{ \frac{\sigma}{\sqrt{N}} = 0.01 }[/math], making my best estimate of the g-factor using the mean of the measurements [math]\displaystyle{ g_s = 1.8276 \pm 0.01 }[/math]. This is a relative uncertainty of 0.58%. The accepted value of the g-factor is 2.0023; the mean of my measurements is 8.73% lower than this.

I also used a linear regression method to determine the g-factor based on my data. Since the relationship between frequency and magnetic field is linear ([math]\displaystyle{ \nu = \frac{g_s\times \mu_B}{h} B }[/math]), with the slope being [math]\displaystyle{ \frac{g_s \times \mu_B}{h} }[/math] and "y-intercept" of zero, this makes sense. A plot of my data, as shown in Figure 3, confirms this linear relationship. I used Excel to determine the slope and uncertainty of the line fitting my data using the least-squares method, resulting in my best estimate of the g-factor being [math]\displaystyle{ 1.86 \pm 0.005 }[/math] based on my data. This has a relative uncertainty of 0.28%, and is 7.27% lower than the accepted value of the g-factor.

Remarks

SJK 23:43, 18 November 2007 (CST)

Wow, your data look awesome! Your figure looks great, and obviously very carefully taken data that look very linear. I agree with your remarks here, and I think the fact that the data look so linear and produce such a good fit gives strong clues to the possible sources of systematic error. It would seem that either your RF freq. measurement is biased by a fixed percentage, or your magnetic field is off by a fixed percentage. As you mention, either one of these is possible, though one would hope that the RF frequency divider was manufactured with better than 10% accuracy. So, the magnetic field would be a likely candidate. Unfortunately, we don't have a Gauss meter, though we should try to get one for next year. I'm not sure how much a single-axis hall probe would cost, but we do have an almost-homemade one that you could try to get working. Excellent work on this lab!

Also another thought: the RF coil needs to be aligned perpendicular to the field. That, combined with misaligned coils could be pretty significant.

I was excited about this lab, since being able to detect quantum phenomena is fun. I was a little bit disappointed that my result for the g-factor was almost 10% different than the accepted value. The method used to measure the resonant frequency and current going through the Helmholtz coils was kludgy, however. Eyeballing an oscilloscope and tweaking knobs until two traces appear to cross zero at the same time is, it seems to me, inherently imprecise. I'm not very familiar with other electronic instrumentation, so I'm not sure if there is a better way to have done this - but it sure seems like there should be. I also wondered if the Earth's magnetic field would affect this experiment, but it seems that the strength of that field is around 0.3 Gauss (though this varies widely, and might be significantly different in the lab) which is much smaller than the magnetic field we put our sample in. Also, I didn't pay attention to which way we had aligned our Helmholtz coils in relation to the magnetic poles of Earth, as I figured it didn't matter.

I also noticed during the experiment that in using the phase shifter, turning the knob (which is connected to the variable resistor) had the effect of not only changing the phase of the ESR probe signal in relation to the voltage to the Helmholtz coils (displayed on the oscilloscope) but also of changing the amplitude of the voltage to the Helmholtz coils. Thinking back, the effect wasn't large but it was noticeable. I didn't check the multimeter to see if the current going through it changed as the amplitude of the voltage readings on the oscilloscope changed - but thinking about it, I certainly should have. I sure hope they changed together, as I can't think of any reason why they shouldn't have, although I haven't taken junior E&M yet - that comes next semester. I'm sure we will deal with things like our phase shifter (or RC circuits being used in alternating currents).

Also, part of the ESR adapter divided the frequency from megahertz to kilohertz (which are more easily counted by the cheap electronic equipment that is ubiquitous in undergraduate laboratories). I don't know exactly how this frequency divider was designed, but if it were poorly designed it certainly could have been a source of error. I would hope that this was a precise process, but it may not have been.

Another source of error may have been the alignment of the Helmholtz coils. The calculations I did for the magnetic field produced by a current through these coils don't take into account their alignment; if they weren't parallel or were too close together or too far apart, the magnetic field would probably be slightly different.

Purpose

The lab manual we have been using for this class, which is last year's lab manual written by Dr. Gold, has a very sparse section for the Poisson Statistics lab. Kyle and I referred to it for the basic premise of the measurements we took and I referred to it for some basic ideas for data analysis.

The overall goal of this lab is familiarization with multichannel analyzers and Poissonian data. Reading through my colleagues' notebooks, I came up with some important questions I wanted to answer in the course of this lab. Specifically,

- Are the random, independent events of muons striking a scintillation detector in our laboratory described accurately by a Poisson distribution?

- Is the standard deviation of the number of events we measured described accurately by the standard deviation of a Poisson distribution?

- Does the goodness-of-fit of the Poisson distribution change with the anticipated number of events?

- Does a Gaussian distribution accurately represent random, independent events?

- Does the goodness-of-fit of the Gaussian distribution change with the anticipated number of events?

Methods

We used a multichannel analyzer and a scintillation detector to record the event of muons striking a scintillating material in our lab. I used MATLAB to examine the distribution of these events. For a summary of what I did in MATLAB, take a look at that section in my notebook entry.

Data

For a good look at what we measured, take a look at my figures and tables.

Conclusions and Remarks

- Are the random, independent events of muons striking a scintillation detector in our laboratory described accurately by a Poisson distribution?

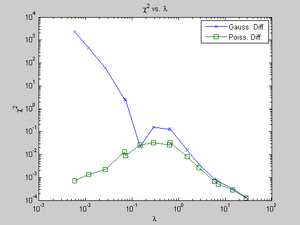

- While difficult to answer conclusively, I believe the answer to this question is yes. As evidence, I present the Chi-Square goodness-of-fit of the Poisson PMF for my data. From what I've read, if this number is less than 1 or so, the fit is good. Since my Chi-Square ranges from 0.0001 to 0.032, I believe my fit is excellent.

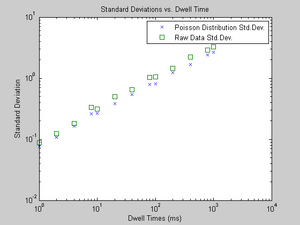

- Is the standard deviation of the number of events we measured described accurately by the standard deviation of a Poisson distribution?

- Qualitatively, the answer is sort of. In Figure 5, I demonstrate that the standard deviation of my data is always a bit larger than the standard deviation of a Poisson distribution (the square root of lambda). I don't know why this is; systematic error is a probable culprit, although I'm unsure what exactly would cause this. Somehow, I think the events that the MCA counted were not completely random and independent, or maybe the discriminator didn't pass along many events it should have, or something else happened.

- Does the goodness-of-fit of the Poisson distribution change with the anticipated number of events?

- Yes. As lambda increases, as seen in Figure 4, the goodness-of-fit improves. This is a relatively small amount compared to the Gaussian distribution's goodness-of-fit, though.

- Does a Gaussian distribution accurately represent random, independent events? And does the goodness-of-fit of the Gaussian distribution change with the anticipated number of events?

- If the number of anticipated events you are examining is large enough, I believe the Gaussian distribution accurately represents what you see. As Figure 4 demonstrates, the goodness-of-fit drastically changes as lambda changes.
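The comparisons discussed in the answers above can be illustrated with a short Python sketch. It uses synthetic counts drawn from a Poisson distribution, not our muon data, and the function names and the simple sum-of-squared-differences measure are assumptions made for illustration (they are not the goodness-of-fit statistic computed in my MATLAB analysis). The first part compares the sample standard deviation of the counts with the square root of lambda; the second compares the Poisson PMF with a Gaussian of the same mean and variance for a small and a large lambda.

```python
import math
import random

def poisson_pmf(k, lam):
    """Probability of observing exactly k events when the mean is lam."""
    return math.exp(-lam) * lam ** k / math.factorial(k)

def gaussian_pdf(x, mu, sigma):
    """Gaussian probability density with mean mu and standard deviation sigma."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))

def sample_poisson(lam, rng):
    """Draw one Poisson count (Knuth's multiplication method, fine for small lam)."""
    limit = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        p *= rng.random()
        if p <= limit:
            return k
        k += 1

def gaussian_mismatch(lam):
    """Sum of squared differences between the Poisson PMF and the matching Gaussian."""
    k_max = int(lam + 10.0 * math.sqrt(lam)) + 10
    return sum(
        (poisson_pmf(k, lam) - gaussian_pdf(k, lam, math.sqrt(lam))) ** 2
        for k in range(k_max + 1)
    )

# Part 1: synthetic counts, sample standard deviation vs. sqrt(lambda).
rng = random.Random(0)
lam = 4.0
counts = [sample_poisson(lam, rng) for _ in range(5000)]
mean = sum(counts) / len(counts)
std = math.sqrt(sum((c - mean) ** 2 for c in counts) / (len(counts) - 1))
print(f"mean = {mean:.3f}, std = {std:.3f}, sqrt(lambda) = {math.sqrt(lam):.3f}")

# Part 2: the Gaussian approximation improves as lambda grows.
for test_lam in (1.0, 30.0):
    print(f"lambda = {test_lam:4.1f}: mismatch = {gaussian_mismatch(test_lam):.2e}")
```

For ideal Poisson counts the sample standard deviation should sit close to the square root of lambda, so a consistently larger value in real data points to something beyond pure counting statistics.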

I was wholly unfamiliar with the Poisson distribution before this lab, and became fairly comfortable with it by the end. I also got a bit more comfortable with the Gaussian distribution and using it.

I did struggle with MATLAB at first, but I think I got a handle on it. And I got some nice figures out of MATLAB, with a bit of work, that I'm pleased with.

Formal Report

I did my formal report on the Speed of Light lab. I chose to typeset it in LaTeX, and it output a very pretty PDF which you should definitely read.

Koch comments on rough draft

Jesse, Here are my comments on your rough draft. I'll send an email with grade estimate. Additional:

- Calibration! Add a discussion as to how the TAC was calibrated (and also do this if you did not previously). How do we know scope is working properly, etc.
