Physics307L:People/Josey/Rough Draft

Measuring and Predicting Background Radiation Using Poisson Statistics

SJK 12:41, 28 November 2010 (EST)

Good title, author, contact info

Experimentalists: Brian P Josey and Kirstin G G Harriger

Junior Lab, Department of Physics & Astronomy, University of New Mexico

Albuquerque, NM 87131

bjosey@unm.edu

Abstract

SJK 12:53, 28 November 2010 (EST)

I like your abstract. Just a few grammatical and / or typing errors. After any additional things you do during "extra data/analysis week" you will probably add a sentence or two.

In this paper, background radiation was measured over time intervals of differing length, and the resulting data was shown to follow the Poisson distribution. After establishing the Poisson distribution, the data was used to predict the average rate of radiation per second. This estimated value was found to be 30 ± 4 radiations per second. A new set of data was then collected in a run whose interval directly represented the rate of radiation per second. This data indicated that the average rate in the laboratory was 30 ± 5 radiations per second, indicating that the technique is useful for modeling and measuring background radiation.

Introduction

SJK 13:00, 28 November 2010 (EST)

I also like your introduction. In here you're going to have to figure out how to cite a few peer-reviewed publications, just because that's part of our exercise. One suggestion is to find research papers that use Poisson statistics to analyze data, such as for example the division of cells you mention. Maybe in reference 2 you can find those citations. Also, you should talk about what the cosmic radiation is (muons) and where it comes from--lots of interesting stuff there. Also, are muons the only thing you're detecting? Probably not. What other sources? Enough potassium in your body? Other things? I don't know the answer off the top of my head. Another thing you could add is whether a time dependent probability per unit time is still governed by Poisson statistics.

The Poisson distribution, first described by Siméon Denis Poisson in 1838 [1], is used to describe events that occur in discrete numbers at random and independent intervals, but at a definite average rate. Examples include radiation from nuclei over a range of time, the number of births in a maternity ward over time, and divisions of cells in a culture [2]. Mathematically, the Poisson distribution is given as:

[math]\displaystyle{ P_{\mu} (\nu) = e^{-\mu} \frac {\mu ^ {\nu}} {\nu !} }[/math]

where,

- Pμ(ν) - the probability of observing ν counts in a definite time interval,

- μ - the mean number of counts in the time interval.

This distribution has the characteristic property that its standard deviation is equal to the square root of its mean [2].
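The relationship between the mean and the standard deviation can be checked numerically. The following is a minimal sketch, not part of the original analysis: the function names `poisson_pmf` and `poisson_moments` and the cutoff `nu_max` are illustrative assumptions, and the mean of 3 counts is an arbitrary example value.

```python
import math

def poisson_pmf(nu, mu):
    """Probability of exactly nu counts when the mean count is mu (mu > 0).

    Evaluated through logarithms so that large nu does not overflow.
    """
    return math.exp(-mu + nu * math.log(mu) - math.lgamma(nu + 1))

def poisson_moments(mu, nu_max=100):
    """Mean and standard deviation obtained by summing the PMF up to nu_max.

    nu_max is an assumed cutoff; it must be large compared to mu.
    """
    probs = [poisson_pmf(nu, mu) for nu in range(nu_max + 1)]
    mean = sum(nu * p for nu, p in enumerate(probs))
    variance = sum((nu - mean) ** 2 * p for nu, p in enumerate(probs))
    return mean, math.sqrt(variance)

# Arbitrary example: a mean of 3 counts per window
mean, sigma = poisson_moments(3.0)
print(mean, sigma, math.sqrt(3.0))
```

The last two printed numbers should agree, which is the property the later tables rely on.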

Here the Poisson distribution was demonstrated by measuring the background radiation in a standard physics teaching laboratory at 5000 ft elevation. Several sets of data were created by varying the length of the time interval over which radiation events were counted, referred to as the "window size." After several sets of data were taken with window sizes ranging from 10 ms to 800 ms, the average rate per second was calculated and then compared to a set of data taken with a 1 second window size.

Methods and Materials

SJK 13:12, 28 November 2010 (EST)

There are a couple things missing from Methods. The main thing I notice are your software settings. If the software allows for exporting the settings to a file, you should upload it and link to it. Or maybe the settings are recorded at the top of the data files? I do remember many lines of header in those files. I'm going to suggest below that you link to the raw data files somehow. Also, you leave off the brand name on some items. A common way to do this is to say, Scintillator model ___ (CompanyName, city).

For this experiment, a combined scintillator-photomultiplier tube (scintillator-PMT), shown in Figure 1, was used to collect data [3]. Every time the scintillator detected radiation, it emitted a flash of ultraviolet light toward the PMT, which converted this light signal into a single voltage pulse. This pulse was carried to an internal MCS card in a computer, where it was analyzed by the UCS 30 software. The software counts each voltage pulse and creates a set of data containing the size of the window over which the data was collected, the time, and the number of radiation events that occurred in that window. The PMT requires a high-voltage bias to amplify the light signal. This voltage was supplied by a Spectech Universal Computer Spectrometer power supply, shown in Figure 2, and was set to 1200 V throughout the course of the experiment.

SJK 13:06, 28 November 2010 (EST)

do you mean voltage pulse?

The data was collected using the UCS 30 software, which created a consecutive series of windows of a set length of time and counted the number of voltage pulses, each representing a radiation event, that occurred within each window. This data was then saved into a data file that could be manipulated and processed using MATLAB v. 2009a. To demonstrate the Poisson distribution, the scintillator-PMT, power supply, and computer were all turned on. The UCS 30 software was then loaded, and the window size was set to various lengths of time. Data was collected in a series of runs with window sizes of 10, 20, 40, 80, 100, 200, 400, and 800 ms. Each run contained 2064 windows of the given size. The data was then analyzed, as described in the Results and Discussion sections below, to demonstrate that the background radiation followed a Poisson distribution. This data was then used to predict the behavior of a similar set of data taken with a 1 second window size. After this prediction was made, the system was run again using 2064 windows of 1 second length, and the new data was compared to the results predicted from the initial data.

SJK 13:09, 28 November 2010 (EST)

Often, Analysis methods are an important part of the methods section. But I agree with you moving it to the results. However, I don't see your Matlab code anywhere. You should put it on a page somewhere and link to it. You could link to it in an analysis section of this methods section.

Results

SJK 13:51, 28 November 2010 (EST)

You should link to your raw data (the output files from the software). Hopefully there's an easy way to do this (such as using a public folder). If not, then it may not take too long just to link the hyperlinks (you don't have to embed them all, for sure don't do that).

The data from the scintillator-PMT and the UCS 30 software was processed using Google Docs to determine the range of the number of radiation events per window and the number of windows with each number of radiation events. The fractional distribution of the data was also determined. This data is summarized in Table 1 below:

- Table 1 This table summarizes the raw data from each of the initial experimental runs. For each window size, the first column gives the full range of the number of radiation events per window. The second column gives the number of windows that had the given number of radiation events. For example, in the 10 ms run, only a single window had four radiation events in it. The third and final column gives the fractional probability, out of 1, of such an event occurring in the given run.
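The tabulation described in this caption can be sketched in a few lines. This is an illustration only, not the Google Docs processing actually used: the function name `frequency_table` is hypothetical, and the ten example counts are made-up values rather than measured data.

```python
from collections import Counter

def frequency_table(counts_per_window):
    """Return rows of (events per window, number of windows, fractional frequency)."""
    tally = Counter(counts_per_window)
    total = len(counts_per_window)
    return [(events, tally[events], tally[events] / total)
            for events in sorted(tally)]

# Hypothetical counts for ten windows (illustrative values, not measured data)
example_counts = [0, 1, 0, 2, 1, 0, 0, 1, 4, 0]
for events, n_windows, fraction in frequency_table(example_counts):
    print(events, n_windows, fraction)
```

Each row corresponds to one line of a table laid out like Table 1, and the fractional frequencies sum to 1 by construction.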

SJK 13:53, 28 November 2010 (EST)

Nice tables and captions

SJK 13:56, 28 November 2010 (EST)

For Figure 3, "window" is confusing terminology because it is redundant with the time window. I think "events" is better terminology than "radiations." Can you overlay the frequencies for the best fit Poisson? Other than that, good figure.

From this data, a graph was generated to illustrate the characteristics of the data. This graph is given in Figure 3. For the sake of simplicity, only four of the nine data runs are graphed; however, even from this small sample, the trends across the whole data set are clear. While the implications of this data are discussed in the Discussion section below, the data makes it clear that as the window size increases, the most probable number of radiations per window increases and the distribution of the probabilities spreads out. This spreading is the result of a larger standard deviation. Together, these two trends are qualitative reasons to believe that the data follows a Poisson distribution [4].

A stronger argument for the Poisson distribution comes from comparing the averages and standard deviations of each set of data. This data is summarized in Table 2 below:

- Table 2 This table summarizes the raw data collected from the runs with varying window sizes, from 10 ms to 800 ms. The first column gives the average number of radiation events per window as calculated directly from the data. The second and third columns give the standard deviation of the data, first as the square root of the average and then as calculated directly from the data. The fourth column gives the percent error of the standard deviation calculated from the data relative to the square root of the average. Because this difference is very low, it is clear that the data follows a Poisson distribution. The last two columns are the average and standard deviation converted from counts per window to rate per second.

As this table illustrates, the average number of radiation events per window grows as the window size increases, and the standard deviation grows along with it. There are two standard deviations in the table. The first is the square root of the average, while the second is calculated directly from the data. The reason for this, discussed in greater detail below, is that the standard deviation of a Poisson distribution is equal to the square root of the average. As the percent error between the two values shows, the data follows this relationship very closely: the differences never exceed 1.5 %.
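The comparison in Table 2 can be sketched as follows. This is a hedged illustration rather than the authors' spreadsheet: the function name `poisson_check` is hypothetical, the use of the sample standard deviation (dividing by N - 1) is an assumption, and the eight example counts are made-up values.

```python
import math
import statistics

def poisson_check(counts_per_window):
    """Compare sqrt(mean) with the standard deviation computed from the data.

    Returns (mean, sqrt of mean, sample standard deviation, percent difference),
    where the percent difference is taken relative to sqrt(mean).
    """
    mean = statistics.fmean(counts_per_window)
    sqrt_mean = math.sqrt(mean)
    sample_std = statistics.stdev(counts_per_window)  # assumes N - 1 normalization
    percent_diff = 100.0 * abs(sample_std - sqrt_mean) / sqrt_mean
    return mean, sqrt_mean, sample_std, percent_diff

# Hypothetical counts (illustrative values, not measured data)
example = [2, 4, 3, 1, 5, 3, 2, 4]
print(poisson_check(example))
```

With only eight windows the two estimates differ noticeably; agreement at the percent level needs a run with thousands of windows, as in the experiment.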

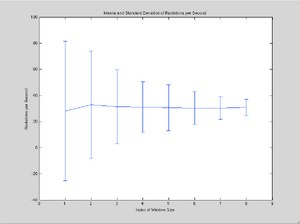

The averages and standard deviations from the data were then divided by the window size to give the rate and its error in radiations per second. These values are graphed in Figure 4. The standard deviation of the rate clearly decreases as the window size approaches 1 second, indicating greater accuracy in the data from longer windows. From this data, the average rate and standard deviation for a window size of 1 second were calculated. This value, x_wav, was calculated as a weighted average [2]:

[math]\displaystyle{ x_{wav} = \frac {\sum {w_i x_i}} {\sum {w_i}} }[/math]

where

- x_wav - the best estimate for the average number of radiations per second,

- w_i - the weight of each average, which is the inverse of the square of the standard error for a given run,

- x_i - the average value from each run.
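The weighted average defined above, together with the usual uncertainty of a weighted average, 1/sqrt(sum of the weights), can be sketched as below. The function name `weighted_average` is hypothetical and the three example rates and errors are made-up values, not the entries of Table 2.

```python
import math

def weighted_average(values, sigmas):
    """Weighted average with weights w_i = 1 / sigma_i**2.

    Returns (x_wav, sigma_wav), where sigma_wav = 1 / sqrt(sum of weights).
    """
    weights = [1.0 / s ** 2 for s in sigmas]
    total_weight = sum(weights)
    x_wav = sum(w * x for w, x in zip(weights, values)) / total_weight
    sigma_wav = 1.0 / math.sqrt(total_weight)
    return x_wav, sigma_wav

# Hypothetical rates and errors in events per second (not measured data)
rates = [29.0, 31.0, 30.0]
errors = [10.0, 5.0, 5.0]
x_wav, sigma_wav = weighted_average(rates, errors)
print(x_wav, sigma_wav)
```

Runs with smaller errors pull the result toward their own value, which is why the longer windows dominate the estimate.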

The standard deviation is then given as:

[math]\displaystyle{ \sigma_{wav} = \frac {1} {\sqrt {\sum {w_i}}} }[/math]

where again, w_i is the weight of each average, and σ_wav is the best estimate for the standard deviation of the final average. These calculations give a predicted value of 30 ± 4 radiations per second. Another experiment was then run, this time with the window size raised to 1 second. The data from this experiment is summarized in Table 3 below. It is important to note that the predicted average number of radiations per second differs from the measured value by only 0.725 %.

SJK 14:07, 28 November 2010 (EST)

I like that you implemented a weighted average. However, I'm not sure whether it's the best way to do what you're doing? An alternative would be to just use the entire expected number that you get from summing up all the counts in a given run and then dividing by (NumWindows*WindowWidth). The uncertainty in this would then be (sqrt(NumCounts)/(NumWindows*WindowWidth)) and would surely be lower than what you show. You would still have more certainty on larger window widths, but your overall uncertainty (and uncertainty on each point) would be sqrt(2048)=~45 times less. You can tell by looking at your figure that your uncertainty estimates are way too big, so I think I'm right about it. It'd be informative, I think in your final draft to leave in this figure and then show the new one too.
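The alternative suggested in this comment can be sketched as follows: sum all the counts in a run, divide by the total observation time, and take the square root of the total counts as the counting uncertainty. The function name `pooled_rate` is hypothetical and the four example counts are made-up values.

```python
import math

def pooled_rate(counts_per_window, window_seconds):
    """Rate from the total counts in a run and its Poisson counting uncertainty.

    rate        = NumCounts / (NumWindows * WindowWidth)
    uncertainty = sqrt(NumCounts) / (NumWindows * WindowWidth)
    """
    total_counts = sum(counts_per_window)
    total_time = len(counts_per_window) * window_seconds
    rate = total_counts / total_time
    uncertainty = math.sqrt(total_counts) / total_time
    return rate, uncertainty

# Hypothetical run of four 100 ms windows (illustrative values, not measured data)
rate, uncertainty = pooled_rate([3, 2, 4, 3], 0.1)
print(rate, uncertainty)
```

Because the uncertainty falls as the total number of counts grows, a run of a few thousand windows gives a far smaller error bar than the spread of a single window.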

- Table 3 This table summarizes the data taken for a window size of 1 second. On the left is the average predicted by the calculations, its square root, and the predicted standard deviation. Below this is the actual average, its square root, its standard deviation, and the percent difference between the predicted and measured values. On the right is the range of radiations per 1 second window, the raw number of windows with a given number of radiations, and their fractional frequency.

Discussion

SJK 14:10, 28 November 2010 (EST)

I like your discussion. Very nice. I always have a difficult time splitting discussion from the results, but you succeeded.

Qualitatively, the Poisson distribution has a specific trend in its behavior: as the window size over which the counts are taken increases, the average number of events per window increases and the distribution spreads out [4]. As a result, the probability graphs move to the right and flatten on a standard x-y graph, as Figure 3 demonstrates. This behavior indicates that a Poisson distribution could be the most likely way to model and represent the data. However, there is a much stronger argument for this conclusion.

The qualitative behavior of the Poisson distribution is actually the result of the change in the standard deviation with the mean [4]. As Table 2 illustrates, the square root of the mean agrees closely with the standard deviation calculated from the data. In fact, it can be shown that the standard deviation of a Poisson distribution is equal to the square root of its mean [2]. For the first several experimental runs, the standard deviation and the square root of the mean never differ by more than 1.3 %. This agreement is close enough to conclude that the background radiation in the laboratory can be modeled by a Poisson distribution. It also means that as the mean number of radiations per window increases, the standard deviation increases in proportion to the square root of the mean, giving the spreading behavior seen in Figure 3.

Armed with this knowledge of the behavior of the background radiation, the means and standard deviations of the experimental sets between 10 ms and 800 ms were used to determine the average rate of radiation per second. As discussed in the Results section, this value was found using the weighted average method to account for the varying degrees of certainty in the measurements. This generated a value of 30 ± 4 radiations per second, while the experimental test with the one second time window gave a final result of 30 ± 6 radiations per second. As summarized in Table 3, the difference between the two values was only 0.725 %. Because of this close agreement, it is clear that the method of applying the Poisson distribution to calculate the average radiation rate is successful. The same method can be used for any other window size, since the expected result simply scales with the length of the window.
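The scaling with window size can be written out explicitly. As a minimal sketch, assume a constant rate r (events per second) and a window of length T (seconds); neither symbol appears in the original text. The mean and standard deviation of the counts per window are then:

[math]\displaystyle{ \mu = r T, \qquad \sigma = \sqrt{\mu} = \sqrt{r T} }[/math]

Dividing by the window length converts these to a rate and its single-window standard deviation:

[math]\displaystyle{ \frac{\mu}{T} = r, \qquad \frac{\sigma}{T} = \sqrt{\frac{r}{T}} }[/math]

For example, r = 30 per second and T = 0.1 s give μ = 3 and σ ≈ 1.7 counts per window, which corresponds to a single-window spread in the rate of about 17 per second. With T = 1 s the same rate gives μ = 30 and σ ≈ 5.5.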

Conclusions

SJK 14:12, 28 November 2010 (EST)

Good conclusions, too

For this paper, background radiation rates were measured over varying time intervals to determine their behavior and to find a mathematical representation of it. Because the probability distributions followed a pattern in which the mean grew as the interval increased and the experimental standard deviation grew as the square root of the mean, it became clear that the Poisson distribution is the best way to model the behavior of the background radiation. The difference between an ideal Poisson distribution and the data was found never to exceed 1.3 % for all the data. Prompted by this, the average rate of radiation per second was calculated from the data, giving 30 ± 4 radiations per second. This value was checked experimentally, giving 30 ± 6 radiations per second and showing that the model was acceptable and could make accurate predictions. This model is useful for any process that, like background radiation, occurs at random, independent times but at a definite average rate. The methods presented here can be used to predict the behavior of such processes with very accurate results.

Acknowledgments

SJK 14:14, 28 November 2010 (EST)

Good acknowledgements

As always, I would like to thank the professor for this course, Dr. Steve Koch, the TA, Katie Richardson, and my fellow experimenter, Kirstin Harriger, all of whom were very helpful in conducting the lab. I would also like to thank Dr. Michael Gold, the original professor of the course, for his manual and his work in initially producing this experiment and procedure.

References

SJK 12:39, 28 November 2010 (EST)

A challenge for you will be to find peer-reviewed articles to cite. Even if that Poisson article were peer-reviewed (probably not) you can't read French, can you? Anyway, my idea for you is to find research articles that use Poisson statistical tests to analyze systems. One example is that paramecium distribution article I sent you. Just a few of those that you can understand. You may also want to find a paper about a statistical test (such as the paper I sent you) that you can maybe easily try out.

- [1] Poisson, Siméon Denis. Recherches sur la probabilité des jugements en matière criminelle et en matière civile (1838). In French.

- [2] Taylor, John R. An Introduction to Error Analysis: The Study of Uncertainties in Physical Measurements, 2nd ed. (1997), pp. 174-175 for the weighted average, pp. 245-260 for the Poisson distribution.

- [3] Gold, Michael. Physics 307L: Junior Lab Manual (2006).

- [4] Wikipedia. "Poisson distribution" article.

Koch Comments

Steve Koch 12:39, 28 November 2010 (EST): This was a page-turner, even on first read-through! Excellent first draft, and great approach to the open-ended lab. My main criticisms were: (1) incomplete methods, (2) I question the weighted mean method/pretty sure I'm right, and (3) lack of peer-reviewed citations. All very easy to fix. So, what to do for the "extra data/analysis" week? Well, first, try the correction to the weighted mean and make the new graph. Other than that, it's up to you. Can you determine whether you're really looking at mostly background radiation? Is it time dependent (time of day/longer term)? What do the energies of your pulses look like? If you make the MCS too sensitive, do you start triggering on electrical noise, and does it become non-Poissonian? Another idea would be to write Matlab code to simulate Poisson data. I think I may lecture about this tomorrow so you'd know how to do it. Then you could analyze the simulated data with your same methods and see how well it works for known Poisson data. Finally, you could implement further methods, such as in the paper that I sent you. For that, you would need to know the arrival times for all the events, and I don't think the software can do that. But you could use simulated data. OK, thanks again for the excellent first draft!
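The simulation suggested here could be sketched as follows. This is one possible approach written in Python rather than Matlab, and it is not the method from the lecture mentioned above: it assumes events arrive with independent, exponentially distributed waiting times at a constant rate and bins the arrival times into windows. The function name `simulate_counts` and the `seed` parameter are hypothetical, while the rate of 30 per second, the 100 ms window, and the 2064 windows are borrowed from the report.

```python
import random

def simulate_counts(rate_per_second, window_seconds, n_windows, seed=0):
    """Simulate counts per window for events arriving at a constant average rate.

    Arrival times are built from exponential waiting times, then binned
    into consecutive windows of equal length.
    """
    rng = random.Random(seed)
    counts = [0] * n_windows
    total_time = window_seconds * n_windows
    t = rng.expovariate(rate_per_second)
    while t < total_time:
        # Guard against floating point rounding at the upper edge
        index = min(int(t / window_seconds), n_windows - 1)
        counts[index] += 1
        t += rng.expovariate(rate_per_second)
    return counts

counts = simulate_counts(30.0, 0.1, 2064)
mean = sum(counts) / len(counts)
variance = sum((c - mean) ** 2 for c in counts) / (len(counts) - 1)
print(mean, variance)
```

For Poisson data the printed mean and variance should be close to each other, so the same analysis applied to the measured runs can be tested on data whose true rate is known. Because the arrival times are generated explicitly, this approach would also supply the event times needed for further statistical tests.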