Butlin:Unix for Bioinformatics - basic tutorial

Before you jump into this tutorial

These are a few points that you should know before you start this tutorial:

- Linux is Unix re-coded under an open-source licence, the same way as R is a re-coded version of S. Here, when I use the term Unix, I refer to all Unix-like computing environments, i. e. the original Unix that comes with Macs as well as most Linux flavours.

- This tutorial was originally designed to be run on the computer cluster of the University of Sheffield called Iceberg. However, if you cannot access iceberg, then access the compute cluster or desktop compute server at your own institute (assuming that they have some kind of Unix installed, of course). The section Iceberg - making first contact might still be helpful for that.

- Iceberg runs Scientific Linux and the job scheduling programme Sun Grid Engine. If you have an iceberg account, log into it for this tutorial. If you don't have one yet, either get one before you start or work through this tutorial on any Linux/Unix machine, remotely or locally. All tasks of this and the advanced tutorial can be done on any Unix/Linux machine. The only exception is the second part of the second to last task in the advanced tutorial, which shows how you can exploit a computer cluster for your work.

- The section Downloading sequence data for this workshop contains two parts: one for users with iceberg access and a second for those without.

- This tutorial expects you to do all the steps exactly as written from top to bottom. So refrain from experimenting during the first run through it to avoid unnecessary frustration.

- This tutorial is designed to be a guided tour through the world of Unix for you as a biologist. However, it does not explain everything in full detail. Frankly, that would be too boring for me to write down! Instead, it frequently expects you to make sense of the output from a command and thereby infer what this command just did. Now that you know this, I am sure you won't be easily stumped by anything in this tutorial.

- Your command line prompt will end with a $ sign. So a $ sign in this tutorial tells you to type the stuff that comes after the $ sign into your command line and then press ENTER/RETURN in order to execute that command line.

- You will see error messages! I still see them every day that I use the Unix command line. And at the beginning almost all of them are caused by small and sometimes not very obvious typos. So when you see an error message, the first thing you should do is check for typos. To do this, press the Up-arrow key on your keyboard, which brings back the last command you executed and lets you edit it.

- The words "folder" and "directory" mean the same thing. So I use them interchangeably.

- Please refrain from just copy-pasting commands from this tutorial into your command line! I strongly believe that this would lead to a bad learning outcome. On the one hand, you need to practice typing commands. It may feel awkward at the beginning, but believe me you will soon get faster and faster at it. On the other hand, typing in those command lines forces you to look carefully and pay attention to detail. It should also help you remember more of these commands in the future, although you can always come back to this tutorial to refresh your memory. Another reason for typing instead of copy-pasting is occasional unintended formatting of command lines in this tutorial (I am trying to weed that out). For instance, it appears that sometimes curly quotes - “ - have slipped into command lines in this tutorial instead of the straight quotes - " - that you should find on your keyboard. Unsurprisingly, this will lead to errors.

- I often see people going through this tutorial with the window of the Internet browser on one part of their screen and the window of their terminal programme on another. They then use their mouse when they need to make the other window active. This is not optimal. You should have all your windows using the whole screen, i. e. the currently active window covering all other windows that are open. On a Mac you can then use the Cmd + TAB key combination, or on Linux the Alt + TAB key combination, to easily switch to another window. Have a look at this little video. This is a much faster way to switch between windows and allows you to use your whole screen for each window that you have open.

- There are still small (hopefully not large) bugs lurking in this protocol. Please help to improve it by correcting those bugs or adding new tasks, more or better explanations and writing comments on the discussion page. Joining OpenWetWare is easy and allows you to edit pages or create new ones just like on Wikipedia.

Iceberg - making first contact

from Windows

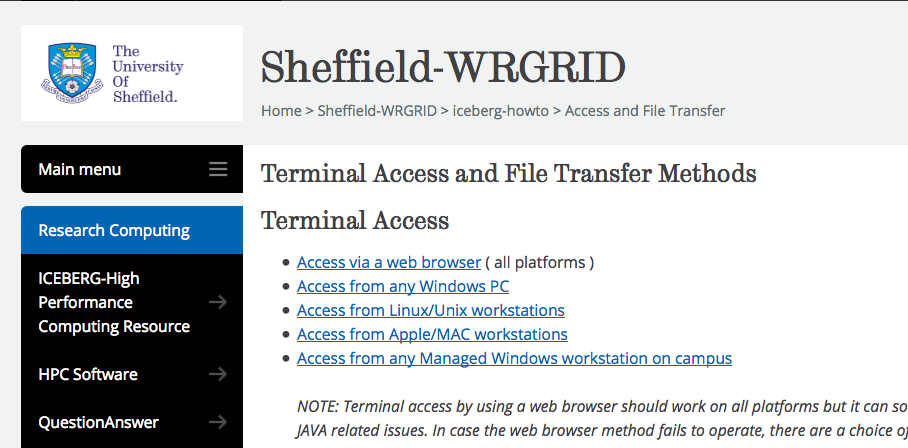

Open this website in a new browser tab. https://www.sheffield.ac.uk/wrgrid/using/access

On the new page, follow the link Access via a web browser. You should then see the following:

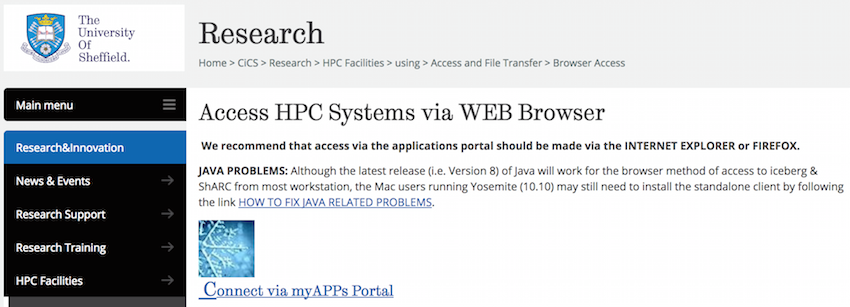

There, click on Connect via myApps Portal, which should open a login site in a new browser tab:

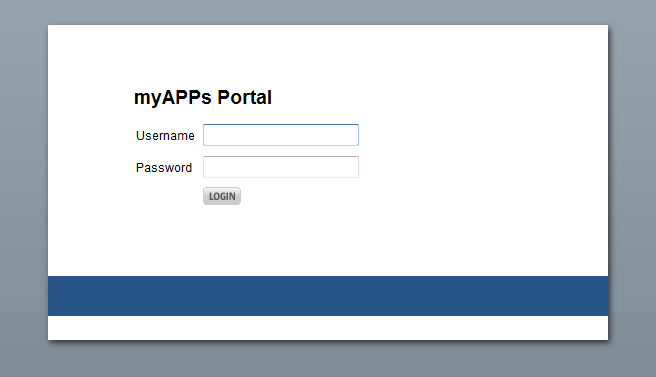

Insert the username/login name and password that you have been given by the administrators of the workshop.

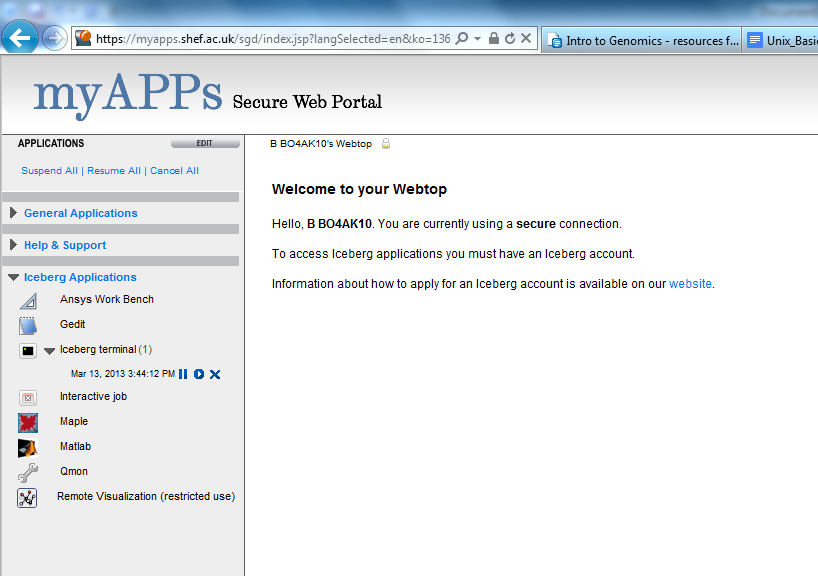

After you log in for the first time you may see a Java warning. Click on 'Activate Java', choose 'Allow and remember' from the drop-down menu, click 'Ok'.

A pop-up box will prompt you to run the Oracle Secure Global Desktop Client. Then click 'Yes' when asked if you wish to connect to the server.

Under Iceberg Applications select Iceberg terminal.

A new window will pop up.

Iceberg access for Mac and Linux users

Open a terminal, then type at the command line prompt:

$ ssh -X your_username@iceberg.shef.ac.uk

You will be asked for your iceberg password. The first time you connect, ssh usually issues a warning about accessing an untrusted server. Just confirm that you want to add iceberg to your trusted server connections. The -X switch opens a connection with X11 forwarding. If you don’t intend to open a GUI on iceberg, skip that switch.

Some basics

On the head node called iceberg1 or iceberg-login1 start a new session on one of the worker nodes by typing:

$ qsh

Please be aware that no work should ever be done on the head node called iceberg1 or iceberg-login1 ! This node is just a gateway to the worker nodes. You can see the name of the node you are on in your command line prompt.

If you’ve logged in via the web browser or from a Unix/Linux terminal window via ssh -X and used qsh (instead of qrsh) to start a new session, then you can open a programme that uses a graphical user interface (GUI), e. g. firefox. Try it out now!

$ firefox

As a side note: if you had logged in via a programme called PuTTY, you would not be able to open graphical user interfaces remotely without installing further programmes (e. g. Exceed).

So where are you now in the file system?

$ pwd

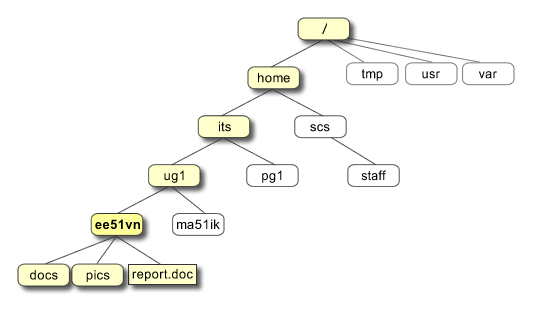

Here is a visual representation of a Unix file system:

taken from the Unix Tutorial for Beginners

Every Unix operating system has a root folder simply called /. Let’s see what’s in it using the command to list information about files. Please note that the command uses the lower case letter 'l', not the number '1':

$ ls /

List the files in the current directory, i. e. your home directory:

$ ls

Your home directory is still empty, or is it?

$ ls -a

The -a switch makes ls show hidden files, which start with a dot in their file name.

Let’s create a new directory and a new empty file.

$ mkdir NGS_workshop

$ ls

$ cd NGS_workshop

$ ls

$ touch test

$ ls -l

$ cd ..

The list output of ls prints out a lot of information about each file and directory.

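drwxr-xr-x 4 cliff user 1024 Jun 18 09:40 directory_name

-rw-r--r-- 1 cliff user 767392 Jun 6 14:28 file_name

Reading each of these lines from left to right:

| Position | Example | Meaning |
|---|---|---|
| 1st character | d | type of file: - = normal file, d = directory, l = symbolic link, and others... |
| characters 2 to 4 | rwx | permissions for owner of file: r = read, w = write, x = execute, - = no permission |
| characters 5 to 7 | r-x | permissions for members of group |
| characters 8 to 10 | r-x | permissions for world |
| 2nd column | 4 | number of links to file or directory contents |
| 3rd column | cliff | owner |
| 4th column | user | group |
| 5th column | 1024 | size |
| 6th column | Jun 18 | date |
| 7th column | 09:40 | time |
| 8th column | directory_name | name |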
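To get a feeling for these fields, here is a minimal sketch that creates a throw-away directory and file (the names perm_demo and some_file are made up for this illustration) and picks the type character and the owner permissions out of the long listing. It is written as a small script, so the lines carry no $ prompt.

```shell
# Work in a throw-away directory so nothing in your home folder is touched.
demo=$(mktemp -d)
mkdir "$demo/perm_demo"
touch "$demo/perm_demo/some_file"

# -d lists the directory itself instead of its contents.
dir_line=$(ls -ld "$demo/perm_demo")
file_line=$(ls -l "$demo/perm_demo/some_file")

# The first character of the line is the type of file.
dir_type=$(printf '%s' "$dir_line" | cut -c1)
file_type=$(printf '%s' "$file_line" | cut -c1)
# Characters 2 to 4 are the permissions for the owner.
owner_perms=$(printf '%s' "$file_line" | cut -c2-4)

echo "directory type: $dir_type"
echo "file type:      $file_type"
echo "owner perms:    $owner_perms"

rm -r "$demo"
```

The owner permissions you see depend on the settings of your system, so they may differ from the example listing above.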

$ ls -lF

Note the forward slash at the end of file names, when you use the -F option. This indicates a directory.

$ ls -lh

How can I look up the manual for the ls command and most other Unix commands?

$ man ls

What does the -h switch to ls do? Look it up in the manual using the following keys. Typing 'q' will close the manual page and return you to the command prompt.

| Keyboard | What it does |
|---|---|
| f or Space | one screen size down |
| b | one screen size up |
| d | half a screen size down |
| u | half a screen size up |
| G | jump to end of file |
| g | jump to beginning of file |
| h | get help |
| q | exit help |
| q | exit less |

On most systems, man displays its pages with the text viewer less, which is why the table above mentions it. Less is an excellent text viewer. It will only read as many lines from the input file as it can fit onto the terminal screen. That means you can have a look at, say, a 100 GB file, even if your computer has only 4 GB of memory (RAM). Text editors like nano, vim or emacs, on the other hand, will first read the whole file into memory. Unix built-in command line programmes generally work on a line basis, i. e. they read in one line of input at a time and do their work with it. That means Unix command line programmes work on very large input files with a very low memory footprint.
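The line-by-line way of working can be tried out without any large file. The following sketch (my own illustration, not part of the workshop data) lets seq generate one million lines on the fly and hands them to other programmes as a stream.

```shell
# Generate one million lines on the fly and count them as they stream past.
# No file is written and wc never has to hold all lines at once.
n_lines=$(seq 1 1000000 | wc -l | tr -d ' ')
echo "lines counted: $n_lines"

# head stops reading after the first three lines, however long the input is.
first_three=$(seq 1 1000000 | head -n 3)
echo "$first_three"
```

The tr -d ' ' part only removes the blanks that some versions of wc put in front of the number.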

Now, save yourself some typing (use the lower case letter 'l' and not the digit '1'):

$ alias ll='ls -lFh'

$ ll

However, this neat little shortcut is only active in the current terminal window. In order to create this alias each time you log into a remote machine like iceberg or open a new terminal window on your local computer, add the above alias command line to your .bash_profile file:

$ nano .bash_profile

Note, on Macs this file is called .profile.

The dot at the beginning is part of the file name, so don’t forget it. Files whose names start with a dot are hidden files. At the bottom of the file add:

alias ll='ls -lFh'

Hit Ctrl + o and Enter on your keyboard to save changes, then Ctrl + x to exit nano. As a side note, the reason why we are using nano here is not that it is a good general purpose text editor, but that it is the simplest text editor on Unix. The two best text editors are Vim and Emacs. Both are NOT easy to get started with, but both are extremely well documented and there are many tutorials online. Eventually you should learn how to use one of them. Why, you might ask, spend time on learning how to use an arcane text editor?! One reason is speed of editing. The other is the host of features you will want when you are spending a lot of your days programming, e. g. showing diffs of different versions of your code. Anyway, you can do simple text editing with nano and that's all we need now.
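If you prefer not to open an editor, the alias line can also be appended from the command line. The sketch below is only one possible way of doing it: it uses a temporary file as a stand-in for .bash_profile (so your real file is not touched) and adds the line only if it is not there yet.

```shell
# Stand-in for ~/.bash_profile, so that this sketch does not touch your real file.
profile=$(mktemp)
alias_line="alias ll='ls -lFh'"

# grep -qxF: quiet, match the whole line, treat the pattern as a fixed string.
# The line is appended only if it is not in the file yet.
grep -qxF "$alias_line" "$profile" || echo "$alias_line" >> "$profile"
# Running the same command line a second time changes nothing.
grep -qxF "$alias_line" "$profile" || echo "$alias_line" >> "$profile"

n_alias=$(grep -cxF "$alias_line" "$profile")
cat "$profile"
rm -f "$profile"
```

To use this for real, you would replace "$profile" with ~/.bash_profile (or ~/.profile on a Mac, as noted above).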

Now, exit iceberg by typing:

$ exit

… to quit the interactive session and get back to the head node iceberg1 and:

$ exit

… to log off the cluster.

Gearing up for work with files and directories

Log back into iceberg and start an interactive session with qsh.

First we want to create a new directory within the directory NGS_workshop. Start by making it the new working directory:

$ cd ~/NGS_workshop

Note this is equivalent to:

$ cd /home/your_username/NGS_workshop

... which specifies the full and absolute path to the directory starting from the root directory /. If you had left out the leading forward slash, you would have asked for a relative path, that means a subdirectory of your current working directory and Unix would not have found this path and complained about it. Try it out!
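The difference between the two kinds of path can be sketched as follows. A scratch directory created with mktemp stands in for your home directory here, so the exact paths printed on your machine will differ.

```shell
# A scratch directory stands in for your home directory.
base=$(mktemp -d)
mkdir "$base/NGS_workshop"

# Absolute path: starts with / and works from anywhere.
cd /
cd "$base/NGS_workshop" && echo "absolute path worked: $(pwd)"

# Relative path: looked up inside the current working directory.
cd "$base"
cd NGS_workshop && echo "relative path worked from $base"

# From a different directory the same relative path does not exist.
cd /
if cd NGS_workshop 2>/dev/null; then
    relative_from_root="found"
else
    relative_from_root="not found"
fi
echo "relative path from /: $relative_from_root"

rm -r "$base"
```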

$ mkdir output

$ ll

Change into the new directory:

$ cd output

Note how your command line prompt has changed.

$ ll

Create six new empty files:

$ touch test test1 test12 test123 Test This_is_a_really_long_file_name_isnt_it

$ ll

Note: Spaces are important for Unix to parse the command line (but there is no difference between one and many spaces). So replace them with underscores in your file names. Generally, you can safely use the characters [a-zA-Z0-9._] in your file names.

Troubleshooting: If you are working on a Mac, then chances are that you are not seeing the file "Test" (starting with an uppercase letter). That is because Macs (at least recently) are shipped with a file system that is not case-sensitive, just case-aware (see this stackexchange discussion for more details). That means on such a filesystem the command touch cannot distinguish between the file names "test" and "Test".

Bash (short for Bourne Again Shell), the programme that provides the command line interface to Unix that you are currently using, comes with so-called wildcards:

$ ll test*

$ ll T*

Two things to note here:

- The asterisk stands for anything, including nothing and

- Unix is case sensitive (if you have the file system supporting it)

$ ll test?

$ ll *_*
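A quick way to see what bash turns a wildcard into is to hand it to echo instead of ll. This sketch repeats the experiment in a throw-away directory (I left out the file "Test" so that it behaves the same on a Mac):

```shell
demo=$(mktemp -d)
cd "$demo"
touch test test1 test12 test123 This_is_a_really_long_file_name_isnt_it

# * matches any number of characters, including none.
star=$(echo test*)
# ? matches exactly one character.
question=$(echo test?)
# Two ? in a row match exactly two characters.
two=$(echo test??)

echo "test*  -> $star"
echo "test?  -> $question"
echo "test?? -> $two"

cd /
rm -r "$demo"
```

Note that it is bash that replaces the pattern with the matching file names. The command (here echo) only ever sees the resulting list.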

Copy, move and remove files

$ cp test* ..

$ ll ..

.. (i. e. two consecutive dots) stands for the parent directory.

$ ll ../../..

$ cd ..

$ ll

$ rm test

Note, the rm command deletes the file (and with the -r switch also directories). It doesn’t move them into a “trash can”, in case you have second thoughts. It also, by default, doesn’t ask you for confirmation.

$ cp output/test* .

Note the dot at the end of the last command line. It’s short for the current working directory or “here”.

$ ll

$ cd output

$ echo haha

echo prints its parameters ("haha") to standard output, which is the terminal screen.

$ echo haha > test1

$ echo hihi > test2

The redirection operator > redirects the output of the echo command into the file test1. Otherwise, echo prints to STDOUT, i. e. the terminal screen. Let's verify that by printing the content of both files to the screen:

$ cat test1 test2

Notice, that the cat command has printed the contents of test2 right after the contents of test1. In other words it has concatenated the two files.

$ echo hohoho >> test1

$ cat test1

The >> operator appends the output of echo to the end of the file test1.

$ cat test1

$ echo "hoohooo" > test1

$ cat test1

The text that we previously stored in the file test1 ("haha" and "hohoho") has disappeared! Redirecting the output of a command into an existing file overwrites it without notice. Remember this and be careful!
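Here is the same experiment as a small script, run in a throw-away directory. The last part goes slightly beyond this tutorial: bash has an option called noclobber that makes > refuse to overwrite an existing file. Take it as an optional extra safeguard rather than something the tutorial depends on.

```shell
demo=$(mktemp -d)
cd "$demo"

echo haha > test1        # creates test1
echo hohoho >> test1     # appends a second line
lines_after_append=$(wc -l < test1 | tr -d ' ')

echo hoohooo > test1     # overwrites both lines without warning
content_after_overwrite=$(cat test1)

# Optional guard: with noclobber set, > refuses to overwrite an existing file.
set -o noclobber
if { echo oops > test1; } 2>/dev/null; then
    guarded="overwritten"
else
    guarded="refused"
fi
set +o noclobber          # switch the guard off again
final_content=$(cat test1)

echo "lines after append: $lines_after_append"
echo "after overwrite:    $content_after_overwrite"
echo "with noclobber:     $guarded"

cd /
rm -r "$demo"
```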

$ cp ../test12 test1

We are copying the file test12 from the parent directory into the current directory and save it as test1. Let’s see what’s in test1 now.

$ cat test1

?!?! The cp command has overwritten the test1 file in the current directory with the content of the file test12 from the parent directory. This file was empty.

$ cat test2

$ mv ../test123 test2

$ cat test2

The mv command (which does cut and paste) has just done the same as the cp command. It has silently overwritten the file test2 in the current directory with the empty file test123.

Imagine these files contained your NGS data or an R script you were working on for a week! Yes, you would have had a backup, of course. Anyway, you should be sufficiently scared by now. Here’s how you make these three commands safer:

All three commands have a switch that causes them to prompt the user for confirmation before overwriting an existing file. It’s -i for all three commands. Check with:

$ man rm

$ man cp

$ man mv

We could always type rm -i, cp -i or mv -i , but that’s tedious. Instead we can make this the default behaviour of the three programmes by adding aliases into the .bash_profile file (as before with the alias for the ls command):

$ nano ~/.bash_profile

Then add to the end of the file:

alias rm="rm -i"

alias cp="cp -i"

alias mv="mv -i"

Ctrl + o → Enter → Ctrl + x.

$ rm test*

It still removed without asking for confirmation. That is because we have to tell bash about the changes we just made to the .bash_profile file for these changes to take effect in the current terminal session:

$ source ~/.bash_profile

$ touch test1 test2 test3

$ rm test?

For each question in the interactive prompt of the rm command, type y and hit Enter.

Each time you start a new terminal session, bash will read your .bash_profile file. From now on, for every file you want to remove, the rm command will ask you for confirmation. Now, if that becomes too tedious, use the -f switch:

$ rm -f test*

which in this case will remove, without prompting for confirmation, all files (but not folders) in the current directory which start with "test".

Apart from the above three commands, you can generally expect all standard Unix text searching, filtering or manipulation commands (which you will mainly encounter in the advanced part of this tutorial) to leave the input file unchanged. They will read input, do their work with it and spit out the result onto the screen unless you are redirecting and thereby saving it into a new file. There is still one important thing you need to be aware of: never redirect output back into the input file. This will leave you with an empty input file!
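To see why redirecting back into the input file is a trap, consider this sketch with a made-up three-line file. Bash empties the target of > before the command starts, so sort finds nothing left to read. The usual way out is to write to a new file first and rename it afterwards.

```shell
demo=$(mktemp -d)
cd "$demo"
printf 'b\na\nc\n' > input.txt
cp input.txt backup.txt

# WRONG: bash empties input.txt before sort gets a chance to read it.
sort input.txt > input.txt
size_after_mistake=$(wc -c < input.txt | tr -d ' ')
echo "size of input.txt after the mistake: $size_after_mistake bytes"

# Safer: write to a new file first, then replace the original.
cp backup.txt input.txt
sort input.txt > input.sorted && mv input.sorted input.txt
first_line=$(head -n 1 input.txt)
echo "first line after safe sorting: $first_line"

cd /
rm -r "$demo"
```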

Now that you know what traps to avoid, you should not be afraid to experiment with these commands (which you will learn about soon), since they will not manipulate your precious input files.

Simple file renaming and TAB-completion

$ ll

When you type the next command line, instead of typing in the "really long file name", type "Th", then hit TAB (TAB - the button left of Q on your keyboard). bash should complete the rest of the file name.

$ mv This_is_a_really_long_file_name_isnt_it shorter_file_name.txt

$ ll

$ man mv

You see that the mv command can also be used to rename single files. Later we will see how to rename lots of files with one command line.

Use TAB-completion as often as you can! It will save you a lot of unnecessary typing and correcting of typos.

Accessing the command history

If you don’t want to keep retyping things you have already entered before:

| Up-arrow | scroll through previous commands |

View your command line history:

$ history | less

The command line history can get long and would flood your screen with hundreds of lines of output. So we pipe the output of the history command into the text viewer less. With the | operator you can pipe the output of one command into another command and thus glue many commands together into a pipeline. Later in the advanced part of this tutorial, you will see what a powerful feature that is.
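As a small foretaste of such a pipeline, the following sketch counts how often each word occurs in a made-up list of five words. The history command is not used here, because it has nothing to show inside a script.

```shell
# Every | hands the output of the command on its left to the command on its right:
# sort brings identical words together, uniq -c counts them,
# sort -rn puts the highest count first.
counts=$(printf 'test\nTest\ntest\noutput\ntest\n' | sort | uniq -c | sort -rn)
echo "$counts"

# The most frequent word is in the second column of the first line.
top_word=$(echo "$counts" | head -n 1 | awk '{print $2}')
top_count=$(echo "$counts" | head -n 1 | awk '{print $1}')
echo "most frequent: $top_word ($top_count times)"
```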

Command line shortcuts

| Keyboard | What it does |
|---|---|
| Ctrl + a | jump to beginning of command line |
| Ctrl + e | jump to end of command line |
| Ctrl + w | delete word left of cursor |
| Ctrl + u | delete everything left of cursor |
| Ctrl + r | search for a command line in your history |

$ sleep 600

If you don't want to wait 10 minutes:

| Ctrl + c | kill the foreground programme and get the command line prompt back |

Tidying up

$ cd ..

$ rm -R output

$ rm test*

Gearing up for work with data and programmes

Let’s copy the example data files for the rest of this basic Unix module and the advanced Unix module into your newly created directory NGS_workshop.

If you have access to iceberg

When you type the following command line, try using TAB-completion to save typing and avoid spelling errors.

$ mkdir -p ~/NGS_workshop/Unix_module

$ cp -Rv /usr/local/extras/Genomics/Example_data/Unix_module/NGS_workshop/* ~/NGS_workshop/Unix_module

$ ll Unix_module

$ du -hs ~/NGS_workshop

If you don't have access to iceberg

Download the example data from OpenWetWare with wget:

$ mkdir -p ~/NGS_workshop/Unix_module

$ cd ~/NGS_workshop/Unix_module

$ wget --no-check-certificate -O Unix_module_example_data.tar.gz https://tinyurl.com/y7t6qz2n

$ tar xzf Unix_module_example_data.tar.gz

$ ll

You will probably want to copy/paste the wget command into your Iceberg terminal. To do this, first mark the command line with the mouse, then press Ctrl + c (or Cmd + c on a Mac), then make the Iceberg terminal window active and press the central mouse button, i. e. the scrolling wheel. On a Mac you would not press the central mouse button, but instead just Cmd + v and on Linux Shift + Ctrl + v.

TASK 1: How do I do a local install of a suite of programmes that come as C source code?

A local install of a programme does not require administrator privileges ($ sudo su) and installs programmes, libraries and documentation in your home folder or any other folder you have write privileges for. In your home directory type:

$ cd

$ ll -a

The first folder at the top stands for the directory you are currently in, ./ . At the left you can see that you have read and write access for this directory. For more on permissions, see here.

$ mkdir prog src

Let’s install samtools.

$ cd src

$ wget --no-check-certificate -O Samtools-1.3.tar.bz2 https://tinyurl.com/ybg7sol4

If you didn't know the exact download link you could download the programme from a web browser. In order to open a GUI on Iceberg, X11 (i. e. graphics) forwarding must be enabled and work (unfortunately it doesn’t with PuTTY). If you can, log into iceberg via the web browser or with ssh -X from a terminal window as described above and on the iceberg head node iceberg1, type:

$ qsh

instead of qrsh. Then firefox will open remotely.

$ firefox

In the new firefox window, you could then go to the samtools download page and download the latest source code into your new src directory. But don't do this now. You should have already downloaded samtools with the above command.

The samtools source code you just downloaded was packed into a tar archive and compressed with bzip2. We need to uncompress and unpack the archive, which tar does in one step when given the -j switch (for bzip2). Then we move into the new folder that was created and have a look at the installation instructions.

$ tar -jxf Samtools-1.3.tar.bz2

$ ll

$ cd samtools-1.3

$ less INSTALL
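If you would like to see what tar does without touching the samtools download, here is a sketch with a made-up toy archive (toy-1.0 is not a real programme). It uses gzip compression with the -z switch, because gzip is available practically everywhere. The -j switch used above is the bzip2 counterpart and works the same way.

```shell
demo=$(mktemp -d)
cd "$demo"
mkdir toy-1.0
echo "run make" > toy-1.0/INSTALL

# c = create, z = compress with gzip, f = name of the archive file.
tar -czf toy-1.0.tar.gz toy-1.0
rm -r toy-1.0

# t = list the contents without unpacking anything.
listing=$(tar -tzf toy-1.0.tar.gz)
echo "$listing"

# x = extract; uncompressing and unpacking happen in this one step.
tar -xzf toy-1.0.tar.gz
install_text=$(cat toy-1.0/INSTALL)
echo "$install_text"

cd /
rm -r "$demo"
```

Listing an archive with -t before unpacking is a good habit, since it shows you which folder (if any) the files will end up in.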

Compiling the C code requires running the configure script and then the make command:

$ ll

$ ./configure CC=/usr/bin/gcc CPP=/usr/bin/cpp

$ make

$ ll

If all went well, you should find new executable files in the samtools folder. If it didn't, then you might not have a C compiler installed. If you are working on a Mac, get the Xcode developer tools installed. Linux usually ships with the open source compiler gcc. Now, copy the executable into the folder prog in your home directory.

$ cp samtools ~/prog

Unix automatically expands the tilde to the path of your home directory.

The manual for samtools is in the file called samtools.1. Let's create a folder for manuals in your home directory and put the samtools manual in there.

$ mkdir -p ~/man/man1

$ cp samtools.1 ~/man/man1

$ ll ~/man/man1

Now let's try to call samtools:

$ samtools

Unix can’t find a programme called samtools, but where does it actually look for programmes? Here:

$ echo $PATH

PATH is a so-called environment variable of bash. In case you were wondering about the $ that appears here in front of PATH: it’s part of the bash syntax and simply means “give me the contents of that variable”. To see all current environment variables and what they contain, type:

$ env

$ ./samtools

Aaah, why does this work? Because you have specified the path to the executable. ./ stands for the current directory, remember? You could also have typed:

$ /home/your_username/src/samtools-1.3/samtools

$ pwd

If you want to execute samtools from any directory without having to specify the whole path to its location in the file system, then simply add the folder where you store your executables to the beginning of the PATH environment variable:

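$ ll ~/prog

$ export PATH=~/prog:$PATH

$ echo $PATH

Note that the full path to your prog folder now appears at the beginning of your PATH environment variable. Do not put quotes around ~/prog here: bash does not expand a tilde inside quotes to the path of your home directory.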

Now type:

$ samtools

$ which samtools

Change to any other directory and call samtools. It should still work. Now, if you log out of the interactive session and back into another with qsh, you’ll see that your changes to the PATH variable have been lost. To make them permanent, add them to your ~/.bash_profile file as you did earlier for the aliases.

$ nano ~/.bash_profile

At the end of the file, enter the same command line you used before at the command prompt:

export PATH=~/prog:$PATH

Ctrl + o → Enter → Ctrl + x.

$ source ~/.bash_profile

$ echo $PATH

$ samtools
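The way bash uses PATH to find programmes can also be tried out in isolation. In this sketch a scratch folder stands in for ~/prog and hello_tool is a made-up programme consisting of a two-line script. The chmod +x command, which has not been introduced in this tutorial, gives the file execute permission.

```shell
# A scratch folder stands in for ~/prog; hello_tool is a made-up programme name.
prog_dir=$(mktemp -d)
printf '#!/bin/bash\necho "hello from my own programme"\n' > "$prog_dir/hello_tool"
chmod +x "$prog_dir/hello_tool"

# Before: bash looks through the folders listed in PATH and finds nothing.
before=$(command -v hello_tool || echo "not found")

# Put the folder at the front of PATH, as was done for ~/prog.
export PATH="$prog_dir:$PATH"
after=$(command -v hello_tool)
output=$(hello_tool)

echo "before: $before"
echo "after:  $after"
echo "$output"

# PATH is a colon-separated list; print its first three entries one per line.
echo "$PATH" | tr ':' '\n' | head -n 3
```

command -v does much the same job as which: it prints the location of the programme that bash would run.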

Since your personal .bash_profile file is read every time you open a terminal session, your custom addition to the PATH will be read each time. Do the analogous thing for the MANPATH variable, i. e.:

export MANPATH=~/man:$MANPATH

$ source ~/.bash_profile

$ man samtools

TASK 2: My NGS library has finally been sequenced and my sequencing centre has informed me that the sequence data files are on one of their password protected servers ready for download. How do I get those many Gb large files into my iceberg account (or any other Unix system)?

The file storage limit in your home directory on iceberg is only 10Gb, but you have 100Gb available under /data/your_username. You can find more about file storage on Iceberg here.

Downloading sequence data for this workshop

If you have access to iceberg

$ quota

$ cd /data/your_username

$ mkdir raw_data

Any long lasting or compute intensive jobs on iceberg have to be submitted with the qsub command and a job submission script, as we do now:

$ nano ~/NGS_workshop/Unix_module/02_TASK/download.sh

Substitute my email address with yours, of course:

#!/bin/bash

#$ -l h_rt=00:05:00

#$ -l mem=500M

#$ -m be

#$ -M c.kerth[ at ]sheffield.ac.uk

# change to the directory where the data should be stored

cd /data/$USER/raw_data

# wget command line

wget -c --no-check-certificate -O Unix_tut_sequences.fastq.gz https://tinyurl.com/ybfmoxqd

Then submit this job submission script to the SGE scheduler with the qsub command:

$ qsub ~/NGS_workshop/Unix_module/02_TASK/download.sh

$ Qstat

You could now log off iceberg. Your job will be run on the next node available. It might take a few minutes for your job to be assigned a compute node. Check your emails and the raw_data directory! Further details on job submission on iceberg can be found here.
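The lines starting with #$ in the job submission script are instructions for the scheduler: in Sun Grid Engine, -l h_rt requests a maximum run time, -m be asks for an email at the beginning and end of the job and -M gives the address. I assume -l mem is the memory request as configured on iceberg. The following sketch writes such a script from the command line and checks it for syntax errors without submitting anything. The email address is a placeholder and the script is written to a temporary file rather than to download.sh.

```shell
# Placeholder address; replace it with your own.
email="your.name@example.org"
script=$(mktemp)

# Unquoted EOF: $email is filled in now, \$USER is left for the job to expand.
cat > "$script" <<EOF
#!/bin/bash
#$ -l h_rt=00:05:00
#$ -l mem=500M
#$ -m be
#$ -M $email
# change to the directory where the data should be stored
cd /data/\$USER/raw_data
# wget command line
wget -c --no-check-certificate -O Unix_tut_sequences.fastq.gz https://tinyurl.com/ybfmoxqd
EOF

# bash -n only reads the script and reports syntax errors; nothing is executed.
syntax="broken"
bash -n "$script" && syntax="ok"
echo "syntax check: $syntax"

n_directives=$(grep -c '^#\$ ' "$script")
mail_line=$(grep '^#\$ -M' "$script")
cd_line=$(grep '^cd ' "$script")
echo "scheduler instructions found: $n_directives"
echo "$mail_line"
rm -f "$script"
```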

If you don't have access to iceberg

You can test downloading sequence data to any Unix system without a job scheduler like SGE with the following:

$ mkdir raw_data

$ cd raw_data

$ wget -c --no-check-certificate -O Unix_tut_sequences.fastq.gz https://tinyurl.com/ybfmoxqd

Once downloaded have a look at the file:

$ zless Unix_tut_sequences.fastq.gz

This is a version of less that can take a gzipped file as input. There is also zcat.
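Reading a gzipped file as a stream can be sketched with a tiny made-up FASTQ file of two reads (a FASTQ record takes up four lines). I use gzip -dc here, which writes the uncompressed content to standard output and does on all systems what zcat does on Linux.

```shell
demo=$(mktemp -d)
cd "$demo"
# Two made-up reads in FASTQ format (four lines per read).
printf '@read1\nACGT\n+\nIIII\n@read2\nTTGA\n+\nIIII\n' > toy.fastq
gzip toy.fastq    # replaces toy.fastq with toy.fastq.gz

# Uncompress to standard output; the compressed file stays as it is.
n_lines=$(gzip -dc toy.fastq.gz | wc -l | tr -d ' ')
n_reads=$((n_lines / 4))
echo "$n_reads reads"

first_header=$(gzip -dc toy.fastq.gz | head -n 1)
echo "first header line: $first_header"

cd /
rm -r "$demo"
```

This way a large sequence file never has to be stored in uncompressed form on disk.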

Recipe for your own sequence data

This section describes what you should do to download your own sequence data once it's ready. Go to the next section to continue the tutorial.

On your local computer follow the link to the server with the sequence data and log in.

Export the cookie for this site (in Firefox you’ll need to install the add-on Cookie-Exporter).

Use a programme like WinSCP for Windows or Cyberduck for Mac to upload that cookie file from your local computer into your Iceberg account (see here for more info on that).

On Mac and Linux you can also do this with command line tools like scp or rsync, e. g.:

$ rsync -av -e "ssh -l bop08ck" ~/Downloads/MiSeq_cookie.txt iceberg.shef.ac.uk:/data/bop08ck/raw_data

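In this command line, bop08ck is my username, ~/Downloads/MiSeq_cookie.txt is the cookie file and iceberg.shef.ac.uk:/data/bop08ck/raw_data is server:path_to_data_directory.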

Then from your /data/username/raw_data directory on iceberg issue the following:

$ wget --load-cookies MiSeq_cookie.txt web_link_to_your_sequence_file

Downloading, say, 100 GB of sequence data can take several hours, and wget keeps printing its progress report to the terminal the whole time. So you wouldn’t be able to log off or continue to do some other work during the current interactive session. So stop the download with Ctrl + c. You could then issue:

$ wget -c --load-cookies MiSeq_cookie.txt web_link_to_your_sequence_file 2>/dev/null &

The -c switch to the wget command will continue the download from where you stopped it. The ampersand & at the end sends the process into the background and gives you the command prompt back, while 2>/dev/null discards the progress report that wget would otherwise keep printing to the terminal. But you can’t log off iceberg until the job is finished. Logging off would terminate the download process. If you want to be able to log off while a job is running, put the command line into a text file. On the first line of this text file put #!/bin/bash. Then submit the command as a job to the Sun Grid Engine on iceberg with qsub textfile. This is iceberg-specific. If you were logged into a normal Linux compute server (without a job scheduling system like Sun Grid Engine) you could simply add nohup to the beginning of the command line:

$ nohup wget -c --load-cookies MiSeq_cookie.txt web_link_to_your_sequence_file 2>/dev/null &

and then log off without terminating the download. This works with any command.
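The combination of nohup and & can be tried out with a harmless stand-in for the download. In this sketch a one second sleep followed by an echo plays the role of wget, and the output goes into a temporary log file. Both are assumptions made for the illustration.

```shell
log=$(mktemp)

# & sends the command into the background; the prompt comes back at once.
# nohup makes the command ignore the hang-up signal that is sent at log-off.
nohup sh -c 'sleep 1; echo "download finished"' > "$log" 2>&1 < /dev/null &
job_pid=$!
echo "started background job with process ID $job_pid"

# Other work could be done here while the job is running.
# wait blocks until the background job has ended.
wait "$job_pid"
result=$(tail -n 1 "$log")
echo "$result"
rm -f "$log"
```

$! holds the process ID of the last command sent into the background, which is handy if you want to check on it later.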

How can I find files and directories in Unix?

$ cd ~/NGS_workshop

$ ll

Let’s search for a file or directory with the word “stacks” in its name:

$ find . -name "*stacks*"

Here, the find command searches from the current directory recursively in all subdirectories for files and folders which contain “stacks” anywhere in their name. The double quotes around the pattern *stacks* are there to prevent bash from interpreting it first and possibly expanding it into existing file names, which is not what we want. So the quotes make sure that find will always get the pattern we specified.
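Here is a self-contained sketch of such a search in a throw-away directory tree. All file and folder names are made up. It also shows the -type switch of find, which restricts the hits to directories (d) or ordinary files (f).

```shell
demo=$(mktemp -d)
mkdir -p "$demo/results/stacks_run1"
touch "$demo/results/stacks_run1/batch_1.tsv" "$demo/my_stacks_notes.txt" "$demo/other.txt"

cd "$demo"
# The quotes hand the pattern *stacks* to find untouched by bash.
hits=$(find . -name "*stacks*" | sort)
echo "$hits"
n_hits=$(echo "$hits" | wc -l | tr -d ' ')

# -type d restricts the hits to directories.
dirs_only=$(find . -type d -name "*stacks*")
echo "directories only: $dirs_only"

cd /
rm -r "$demo"
```

Note that batch_1.tsv is not among the hits, although it sits inside a folder with "stacks" in its name: -name only looks at the name of the file itself.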

$ find ~ -name "*stacks*"

This would be searching in your whole home directory. You can do much more with the find command. For instance, take a look here. But what if you don’t have any idea about the name of the file you are looking for, but you know it contains a certain word, i. e. how can you do the search on the contents of the files instead of their file names? Let’s say you remember that the file you are looking for contains the word “consensus” and you are not sure about upper/lower cases.

$ grep -Ri "consensus" *

This should print out the name of each file in which it found a match, followed by a colon and the full line in which the match occurred, usually also highlighting the matching part. We are using grep to search within files. The -R switch tells grep to look recursively in all subdirectories. The -i switch tells it to ignore the difference between upper and lower case. The search pattern is provided in quotation marks and the asterisk at the end is a wildcard that is expanded by bash into all files and folders in the current directory. So grep will search in all files in the current directory and also in all files located in all subdirectories (and subsubdirectories for that matter).

But what if there were so many files to scan that the search would take a really long time? It would help if you could restrict the search to a subset of files. Let's assume you guessed that the file you are looking for has the extension tsv, because it contains "tab separated values". Then the following command line should help you out:

$ grep -Ri "consensus" $(find . -name "*.tsv")

Anything between $() will first be executed by bash and replaced by its output. Here that means the find command line will be executed first and its output, i. e. the file names it found, will be the arguments to the grep command. Instead of $() you will often find backticks `. So the last command line is equivalent to the following command line:

$ grep -Ri "consensus" `find . -name "*.tsv"`

Note, here find finds only one .tsv file, so grep does not prepend the file name to the output, because it only got one file as input. For more details, as usual read the manual:

$ man grep
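One caveat of the $() approach is that bash splits the output of find at every space, so a file or folder name containing spaces reaches grep in pieces. A common alternative, sketched here with made-up files, is to let find call grep itself with the -exec switch.

```shell
demo=$(mktemp -d)
mkdir -p "$demo/sub dir"
printf 'id\tseq\n1\tConsensus\n' > "$demo/a.tsv"
printf 'id\tseq\n2\tCONSENSUS\n' > "$demo/sub dir/b.tsv"
printf 'consensus in a non-tsv file\n' > "$demo/notes.txt"

cd "$demo"
# -exec hands the file names straight from find to grep, so names that
# contain spaces (like "sub dir/b.tsv") arrive in one piece.
# {} stands for the file names found, + means: pass many names at once.
# -l makes grep print only the names of the files with a match.
matches=$(find . -name "*.tsv" -exec grep -il "consensus" {} + | sort)
echo "$matches"
n_matches=$(echo "$matches" | wc -l | tr -d ' ')

cd /
rm -r "$demo"
```

The file notes.txt also contains the word, but it is not searched because find only passes on the names ending in .tsv.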

Recommended for further self-study

Butlin:Unix for Bioinformatics - advanced tutorial

Unix & Perl Primer for Biologists

Endnote

Any contribution to this tutorial, however small, is highly appreciated. So if you can, please correct obvious mistakes or if you don't know how to correct the error or if you just want to suggest an improvement, please mention it on the talk page associated with this page. You will need to get an OpenWetWare account before you can edit pages. Many thanks.